Docs: add versioned docs for 1.10.1 (#14895)

diff --git a/1.10.1/docs/amoro.md b/1.10.1/docs/amoro.md new file mode 100644 index 0000000..f065d7e --- /dev/null +++ b/1.10.1/docs/amoro.md

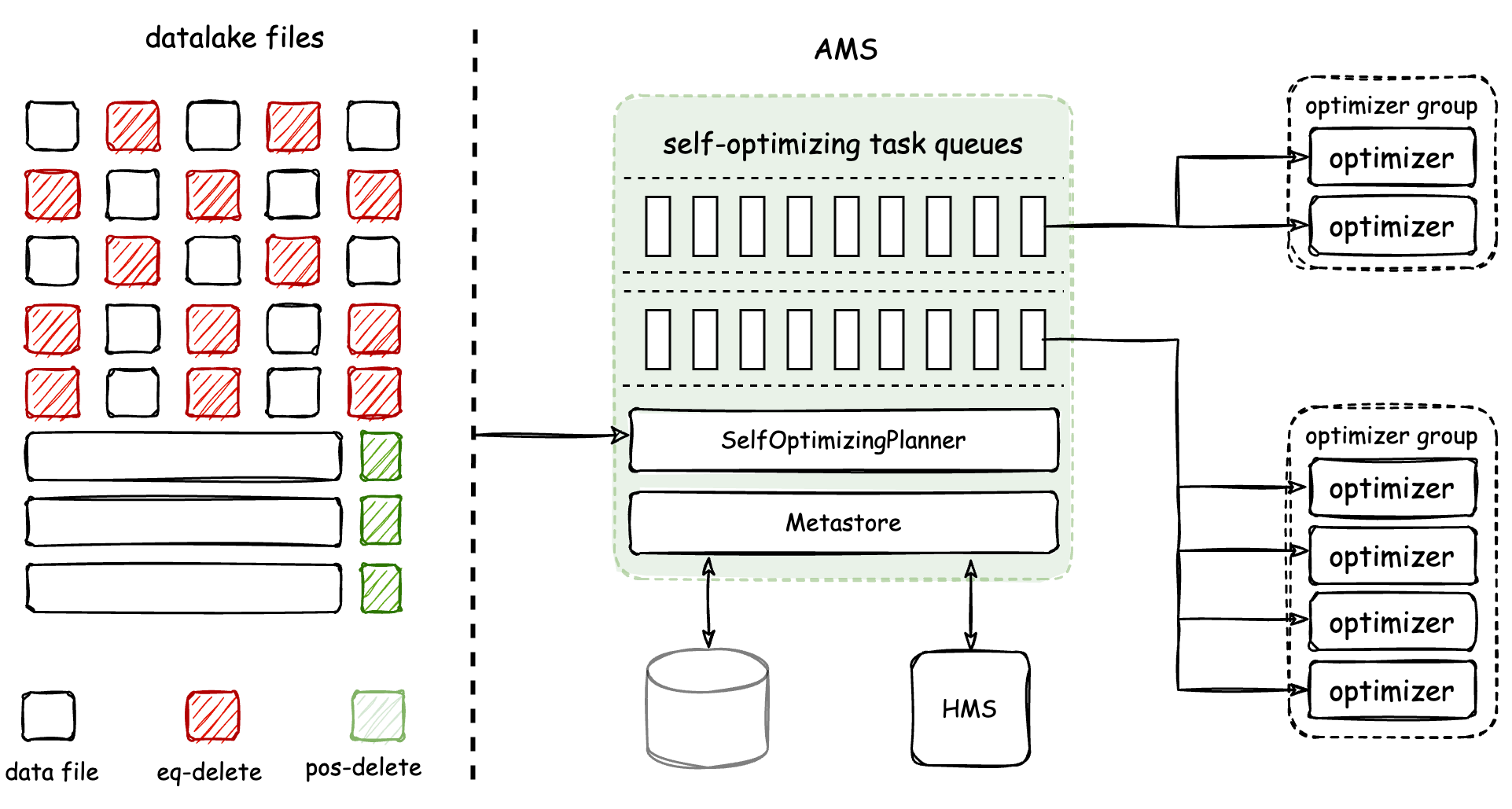

@@ -0,0 +1,69 @@ +--- +title: "Apache Amoro" +--- +<!-- + - Licensed to the Apache Software Foundation (ASF) under one or more + - contributor license agreements. See the NOTICE file distributed with + - this work for additional information regarding copyright ownership. + - The ASF licenses this file to You under the Apache License, Version 2.0 + - (the "License"); you may not use this file except in compliance with + - the License. You may obtain a copy of the License at + - + - http://www.apache.org/licenses/LICENSE-2.0 + - + - Unless required by applicable law or agreed to in writing, software + - distributed under the License is distributed on an "AS IS" BASIS, + - WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. + - See the License for the specific language governing permissions and + - limitations under the License. + --> + +# Apache Amoro With Iceberg + +**[Apache Amoro(incubating)](https://amoro.apache.org)** is a Lakehouse management system built on open data lake formats. Working with compute engines including Flink, Spark, and Trino, Amoro brings pluggable and +**[Table Maintenance](https://amoro.apache.org/docs/latest/self-optimizing/)** features for a Lakehouse to provide out-of-the-box data warehouse experience, and helps data platforms or products easily build infra-decoupled, stream-and-batch-fused and lake-native architecture. +**[AMS](https://amoro.apache.org/docs/latest/#architecture)(Amoro Management Service)** provides Lakehouse management features, like self-optimizing, data expiration, etc. It also provides a unified catalog service for all compute engines, which can also be combined with existing metadata services like HMS(Hive Metastore). + +## Auto Self-optimizing + +Amoro has introduced a Self-optimizing mechanism to +create an out-of-the-box Streaming Lakehouse management service that is as user-friendly as a traditional database or data warehouse. Self-optimizing involves various procedures such as file compaction, deduplication, and sorting. + +The architecture and working mechanism of Self-optimizing are shown in the figure below: + + + +The Optimizer is a component responsible for executing Self-optimizing tasks. It is a resident process managed by [AMS](https://amoro.apache.org/docs/latest/#architecture). AMS is responsible for +detecting and planning Self-optimizing tasks for tables, and then scheduling them to Optimizers for distributed execution in real-time. Finally, AMS +is responsible for submitting the optimizing results. Amoro achieves physical isolation of Optimizers through the Optimizer Group. + +The core features of [Amoro Self Optimizing](https://amoro.apache.org/docs/latest/self-optimizing/) are: + +- Automated, Asynchronous and Transparent — Continuous background detecting of file changes, asynchronous distributed execution of optimizing tasks, + transparent and imperceptible to users +- Resource Isolation and Sharing — Allow resources to be isolated and shared at the table level, as well as setting resource quotas +- Flexible and Scalable Deployment — Optimizers support various deployment methods and convenient scaling + +## Table Format + +Apache Amoro supports all catalog types supported by Iceberg, including common catalog: [REST](https://editor-next.swagger.io/?url=https://raw.githubusercontent.com/apache/iceberg/main/open-api/rest-catalog-open-api.yaml), Hadoop, Hive, Glue, JDBC, Nessie and other third-party catalog. +Amoro supports all storage types supported by Iceberg, including common store: Hadoop, S3, GCS, ECS, OSS, and so on. + +At the same time, we also provide a unique form based on Apache Iceberg, including mixed-Iceberg Format and mixed-Hive Format, so that you can quickly upgrade to the iceberg+hive Mixed table while compatible with the original Hive data + +### Iceberg Format + +Starting from Apache Amoro v0.4, Iceberg format including v1 and v2 is supported. Users only need to register Iceberg’s catalog in Amoro to host the table for Amoro maintenance. Amoro maintains the performance and economic availability of Iceberg tables with minimal read/write costs through means such as small file merging, eq-delete file conversion to pos-delete files, +duplicate data elimination, and file cleaning, and Amoro has no intrusive impact on the functionality of Iceberg. + +### Mixed-Iceberg Format + +[Mixed-Iceberg Format](https://amoro.apache.org/docs/latest/mixed-iceberg-format/) is similar to that of clustered indexes in databases. Each TableStore can use different table formats. Mixed-Iceberg format provides high freshness OLAP through merge-on-read between BaseStore and ChangeStore. To provide high-performance merge-on-read, BaseStore and ChangeStore use completely consistent partition and layout, and both support auto-bucket. + +- BaseStore — stores the stock data of the table, usually generated by batch computing or optimizing processes, and is more friendly to ReadStore for reading. +- ChangeStore — stores the flow and change data of the table, usually written in real-time by streaming computing, and can also be used for downstream CDC consumption, and is more friendly to WriteStore for writing. +- LogStore — serves as a cache layer for ChangeStore to accelerate stream processing. Amoro manages the consistency between LogStore and ChangeStore. + +### Mixed-Hive Format + +[Mixed-Hive](https://amoro.apache.org/docs/latest/mixed-hive-format/) format is a format that has better compatibility with Hive than Mixed-Iceberg format. Mixed-Hive format uses a Hive table as the BaseStore and an Iceberg table as the ChangeStore.

diff --git a/1.10.1/docs/api.md b/1.10.1/docs/api.md new file mode 100644 index 0000000..4dc16b4 --- /dev/null +++ b/1.10.1/docs/api.md

@@ -0,0 +1,261 @@ +--- +title: "Java API" +--- +<!-- + - Licensed to the Apache Software Foundation (ASF) under one or more + - contributor license agreements. See the NOTICE file distributed with + - this work for additional information regarding copyright ownership. + - The ASF licenses this file to You under the Apache License, Version 2.0 + - (the "License"); you may not use this file except in compliance with + - the License. You may obtain a copy of the License at + - + - http://www.apache.org/licenses/LICENSE-2.0 + - + - Unless required by applicable law or agreed to in writing, software + - distributed under the License is distributed on an "AS IS" BASIS, + - WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. + - See the License for the specific language governing permissions and + - limitations under the License. + --> + +# Iceberg Java API + +## Tables + +The main purpose of the Iceberg API is to manage table metadata, like schema, partition spec, metadata, and data files that store table data. + +Table metadata and operations are accessed through the `Table` interface. This interface will return table information. + +### Table metadata + +The [`Table` interface](../../javadoc/{{ icebergVersion }}/org/apache/iceberg/Table.html) provides access to the table metadata: + +* `schema` returns the current table [schema](schemas.md) +* `spec` returns the current table partition spec +* `properties` returns a map of key-value [properties](configuration.md) +* `currentSnapshot` returns the current table snapshot +* `snapshots` returns all valid snapshots for the table +* `snapshot(id)` returns a specific snapshot by ID +* `location` returns the table's base location + +Tables also provide `refresh` to update the table to the latest version, and expose helpers: + +* `io` returns the `FileIO` used to read and write table files +* `locationProvider` returns a `LocationProvider` used to create paths for data and metadata files + + +### Scanning + +#### File level + +Iceberg table scans start by creating a `TableScan` object with `newScan`. + +```java +TableScan scan = table.newScan(); +``` + +To configure a scan, call `filter` and `select` on the `TableScan` to get a new `TableScan` with those changes. + +```java +TableScan filteredScan = scan.filter(Expressions.equal("id", 5)) +``` + +Calls to configuration methods create a new `TableScan` so that each `TableScan` is immutable and won't change unexpectedly if shared across threads. + +When a scan is configured, `planFiles`, `planTasks`, and `schema` are used to return files, tasks, and the read projection. + +```java +TableScan scan = table.newScan() + .filter(Expressions.equal("id", 5)) + .select("id", "data"); + +Schema projection = scan.schema(); +Iterable<CombinedScanTask> tasks = scan.planTasks(); +``` + +Use `asOfTime` or `useSnapshot` to configure the table snapshot for time travel queries. + +#### Row level + +Iceberg table scans start by creating a `ScanBuilder` object with `IcebergGenerics.read`. + +```java +ScanBuilder scanBuilder = IcebergGenerics.read(table) +``` + +To configure a scan, call `where` and `select` on the `ScanBuilder` to get a new `ScanBuilder` with those changes. + +```java +scanBuilder.where(Expressions.equal("id", 5)) +``` + +When a scan is configured, call method `build` to execute scan. `build` return `CloseableIterable<Record>` + +```java +CloseableIterable<Record> result = IcebergGenerics.read(table) + .where(Expressions.lessThan("id", 5)) + .build(); +``` +where `Record` is Iceberg record for iceberg-data module `org.apache.iceberg.data.Record`. + +### Update operations + +`Table` also exposes operations that update the table. These operations use a builder pattern, [`PendingUpdate`](../../javadoc/{{ icebergVersion }}/org/apache/iceberg/PendingUpdate.html), that commits when `PendingUpdate#commit` is called. + +For example, updating the table schema is done by calling `updateSchema`, adding updates to the builder, and finally calling `commit` to commit the pending changes to the table: + +```java +table.updateSchema() + .addColumn("count", Types.LongType.get()) + .commit(); +``` + +Available operations to update a table are: + +* `updateSchema` -- update the table schema +* `updateSpec` -- modify a table's partition spec +* `updateStatistics` -- update statistics files of a table +* `updatePartitionStatistics` -- update statistics for a specific partition in table +* `updateProperties` -- update table properties +* `updateLocation` -- update the table's base location +* `expireSnapshots` -- used to remove old snapshots from table +* `manageSnapshots` -- used to manage table snapshots +* `newAppend` -- used to append data files +* `newFastAppend` -- used to append data files, will not compact metadata +* `newOverwrite` -- used to append data files and remove files that are overwritten +* `newDelete` -- used to delete data files +* `newRewrite` -- used to rewrite data files; will replace existing files with new versions +* `newRowDelta` -- used to remove or replace rows in existing data files +* `newTransaction` -- create a new table-level transaction +* `rewriteManifests` -- rewrite manifest data by clustering files, for faster scan planning +* `replaceSortOrder` -- for replacing table sort order with a newly created order +* `newReplacePartitions` -- used to dynamically overwrite partitions in the table with new data + +### Transactions + +Transactions are used to commit multiple table changes in a single atomic operation. A transaction is used to create individual operations using factory methods, like `newAppend`, just like working with a `Table`. Operations created by a transaction are committed as a group when `commitTransaction` is called. + +For example, deleting and appending a file in the same transaction: +```java +Transaction t = table.newTransaction(); + +// commit operations to the transaction +t.newDelete().deleteFromRowFilter(filter).commit(); +t.newAppend().appendFile(data).commit(); + +// commit all the changes to the table +t.commitTransaction(); +``` + +## Types + +Iceberg data types are located in the [`org.apache.iceberg.types` package](../../javadoc/{{ icebergVersion }}/org/apache/iceberg/types/package-summary.html). + +### Primitives + +Primitive type instances are available from static methods in each type class. Types without parameters use `get`, and types like `decimal` use factory methods: + +```java +Types.IntegerType.get() // int +Types.DoubleType.get() // double +Types.DecimalType.of(9, 2) // decimal(9, 2) +``` + +### Nested types + +Structs, maps, and lists are created using factory methods in type classes. + +Like struct fields, map keys or values and list elements are tracked as nested fields. Nested fields track [field IDs](evolution.md#correctness) and nullability. + +Struct fields are created using `NestedField.optional` or `NestedField.required`. Map value and list element nullability is set in the map and list factory methods. + +```java +// struct<1 id: int, 2 data: optional string> +StructType struct = Struct.of( + Types.NestedField.required(1, "id", Types.IntegerType.get()), + Types.NestedField.optional(2, "data", Types.StringType.get()) + ) +``` +```java +// map<1 key: int, 2 value: optional string> +MapType map = MapType.ofOptional( + 1, 2, + Types.IntegerType.get(), + Types.StringType.get() + ) +``` +```java +// array<1 element: int> +ListType list = ListType.ofRequired(1, IntegerType.get()); +``` + + +## Expressions + +Iceberg's expressions are used to configure table scans. To create expressions, use the factory methods in [`Expressions`](../../javadoc/{{ icebergVersion }}/org/apache/iceberg/expressions/Expressions.html). + +Supported predicate expressions are: + +* `isNull` +* `notNull` +* `equal` +* `notEqual` +* `lessThan` +* `lessThanOrEqual` +* `greaterThan` +* `greaterThanOrEqual` +* `in` +* `notIn` +* `startsWith` +* `notStartsWith` + +Supported expression operations are: + +* `and` +* `or` +* `not` + +Constant expressions are: + +* `alwaysTrue` +* `alwaysFalse` + +### Expression binding + +When created, expressions are unbound. Before an expression is used, it will be bound to a data type to find the field ID the expression name represents, and to convert predicate literals. + +For example, before using the expression `lessThan("x", 10)`, Iceberg needs to determine which column `"x"` refers to and convert `10` to that column's data type. + +If the expression could be bound to the type `struct<1 x: long, 2 y: long>` or to `struct<11 x: int, 12 y: int>`. + +### Expression example + +```java +table.newScan() + .filter(Expressions.greaterThanOrEqual("x", 5)) + .filter(Expressions.lessThan("x", 10)) +``` + + +## Modules + +Iceberg table support is organized in library modules: + +* `iceberg-common` contains utility classes used in other modules +* `iceberg-api` contains the public Iceberg API, including expressions, types, tables, and operations +* `iceberg-arrow` is an implementation of the Iceberg type system for reading and writing data stored in Iceberg tables using Apache Arrow as the in-memory data format +* `iceberg-aws` contains implementations of the Iceberg API to be used with tables stored on AWS S3 and/or for tables defined using the AWS Glue data catalog +* `iceberg-core` contains implementations of the Iceberg API and support for Avro data files, **this is what processing engines should depend on** +* `iceberg-parquet` is an optional module for working with tables backed by Parquet files +* `iceberg-orc` is an optional module for working with tables backed by ORC files (*experimental*) +* `iceberg-hive-metastore` is an implementation of Iceberg tables backed by the Hive metastore Thrift client + +This project Iceberg also has modules for adding Iceberg support to processing engines and associated tooling: + +* `iceberg-spark` is an implementation of Spark's Datasource V2 API for Iceberg with submodules for each spark versions (use runtime jars for a shaded version) +* `iceberg-flink` is an implementation of Flink's Table and DataStream API for Iceberg (use iceberg-flink-runtime for a shaded version) +* `iceberg-mr` is an implementation of MapReduce and Hive InputFormats and SerDes for Iceberg (use iceberg-hive-runtime for a shaded version for use with Hive) +* `iceberg-nessie` is a module used to integrate Iceberg table metadata history and operations with [Project Nessie](https://projectnessie.org/) +* `iceberg-data` is a client library used to read Iceberg tables from JVM applications +* `iceberg-runtime` generates a shaded runtime jar for Spark to integrate with iceberg tables +

diff --git a/1.10.1/docs/assets/images/audit-branch.png b/1.10.1/docs/assets/images/audit-branch.png new file mode 100644 index 0000000..3d6506a --- /dev/null +++ b/1.10.1/docs/assets/images/audit-branch.png Binary files differ

diff --git a/1.10.1/docs/assets/images/historical-snapshot-tag.png b/1.10.1/docs/assets/images/historical-snapshot-tag.png new file mode 100644 index 0000000..6a6be3d --- /dev/null +++ b/1.10.1/docs/assets/images/historical-snapshot-tag.png Binary files differ

diff --git a/1.10.1/docs/assets/images/iceberg-in-place-metadata-migration.png b/1.10.1/docs/assets/images/iceberg-in-place-metadata-migration.png new file mode 100644 index 0000000..1ede320 --- /dev/null +++ b/1.10.1/docs/assets/images/iceberg-in-place-metadata-migration.png Binary files differ

diff --git a/1.10.1/docs/assets/images/iceberg-migrateaction-step1.png b/1.10.1/docs/assets/images/iceberg-migrateaction-step1.png new file mode 100644 index 0000000..aae5166 --- /dev/null +++ b/1.10.1/docs/assets/images/iceberg-migrateaction-step1.png Binary files differ

diff --git a/1.10.1/docs/assets/images/iceberg-migrateaction-step2.png b/1.10.1/docs/assets/images/iceberg-migrateaction-step2.png new file mode 100644 index 0000000..13bb244 --- /dev/null +++ b/1.10.1/docs/assets/images/iceberg-migrateaction-step2.png Binary files differ

diff --git a/1.10.1/docs/assets/images/iceberg-migrateaction-step3.png b/1.10.1/docs/assets/images/iceberg-migrateaction-step3.png new file mode 100644 index 0000000..0175101 --- /dev/null +++ b/1.10.1/docs/assets/images/iceberg-migrateaction-step3.png Binary files differ

diff --git a/1.10.1/docs/assets/images/iceberg-snapshotaction-step1.png b/1.10.1/docs/assets/images/iceberg-snapshotaction-step1.png new file mode 100644 index 0000000..f66a3b2 --- /dev/null +++ b/1.10.1/docs/assets/images/iceberg-snapshotaction-step1.png Binary files differ

diff --git a/1.10.1/docs/assets/images/iceberg-snapshotaction-step2.png b/1.10.1/docs/assets/images/iceberg-snapshotaction-step2.png new file mode 100644 index 0000000..5e255ff --- /dev/null +++ b/1.10.1/docs/assets/images/iceberg-snapshotaction-step2.png Binary files differ

diff --git a/1.10.1/docs/assets/images/partition-spec-evolution.png b/1.10.1/docs/assets/images/partition-spec-evolution.png new file mode 100644 index 0000000..0bc595f --- /dev/null +++ b/1.10.1/docs/assets/images/partition-spec-evolution.png Binary files differ

diff --git a/1.10.1/docs/aws.md b/1.10.1/docs/aws.md new file mode 100644 index 0000000..b6123a6 --- /dev/null +++ b/1.10.1/docs/aws.md

@@ -0,0 +1,819 @@ +--- +title: "AWS" +--- +<!-- + - Licensed to the Apache Software Foundation (ASF) under one or more + - contributor license agreements. See the NOTICE file distributed with + - this work for additional information regarding copyright ownership. + - The ASF licenses this file to You under the Apache License, Version 2.0 + - (the "License"); you may not use this file except in compliance with + - the License. You may obtain a copy of the License at + - + - http://www.apache.org/licenses/LICENSE-2.0 + - + - Unless required by applicable law or agreed to in writing, software + - distributed under the License is distributed on an "AS IS" BASIS, + - WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. + - See the License for the specific language governing permissions and + - limitations under the License. + --> + +# Iceberg AWS Integrations + +Iceberg provides integration with different AWS services through the `iceberg-aws` module. +This section describes how to use Iceberg with AWS. + +## Enabling AWS Integration + +The `iceberg-aws` module is bundled with Spark and Flink engine runtimes for all versions from `0.11.0` onwards. +However, the AWS clients are not bundled so that you can use the same client version as your application. +You will need to provide the AWS v2 SDK because that is what Iceberg depends on. +You can choose to use the [AWS SDK bundle](https://mvnrepository.com/artifact/software.amazon.awssdk/bundle), +or individual AWS client packages (Glue, S3, DynamoDB, KMS, STS) if you would like to have a minimal dependency footprint. + +All the default AWS clients use the [Apache HTTP Client](https://mvnrepository.com/artifact/software.amazon.awssdk/apache-client) +for HTTP connection management. +This dependency is not part of the AWS SDK bundle and needs to be added separately. +To choose a different HTTP client library such as [URL Connection HTTP Client](https://mvnrepository.com/artifact/software.amazon.awssdk/url-connection-client), +see the section [client customization](#aws-client-customization) for more details. + +All the AWS module features can be loaded through custom catalog properties, +you can go to the documentations of each engine to see how to load a custom catalog. +Here are some examples. + +### Spark + +For example, to use AWS features with Spark 3.4 (with scala 2.12) and AWS clients (which is packaged in the `iceberg-aws-bundle`), you can start the Spark SQL shell with: + +```sh +# start Spark SQL client shell +spark-sql --packages org.apache.iceberg:iceberg-spark-runtime-3.4_2.12:{{ icebergVersion }},org.apache.iceberg:iceberg-aws-bundle:{{ icebergVersion }} \ + --conf spark.sql.defaultCatalog=my_catalog \ + --conf spark.sql.catalog.my_catalog=org.apache.iceberg.spark.SparkCatalog \ + --conf spark.sql.catalog.my_catalog.warehouse=s3://my-bucket/my/key/prefix \ + --conf spark.sql.catalog.my_catalog.type=glue \ + --conf spark.sql.catalog.my_catalog.io-impl=org.apache.iceberg.aws.s3.S3FileIO +``` + +As you can see, In the shell command, we use `--packages` to specify the additional `iceberg-aws-bundle` that contains all relevant AWS dependencies. + +### Flink + +To use AWS module with Flink, you can download the necessary dependencies and specify them when starting the Flink SQL client: + +```sh +# download Iceberg dependency +ICEBERG_VERSION={{ icebergVersion }} +MAVEN_URL=https://repo1.maven.org/maven2 +ICEBERG_MAVEN_URL=$MAVEN_URL/org/apache/iceberg + +wget $ICEBERG_MAVEN_URL/iceberg-flink-runtime/$ICEBERG_VERSION/iceberg-flink-runtime-$ICEBERG_VERSION.jar + +wget $ICEBERG_MAVEN_URL/iceberg-aws-bundle/$ICEBERG_VERSION/iceberg-aws-bundle-$ICEBERG_VERSION.jar + +# start Flink SQL client shell +/path/to/bin/sql-client.sh embedded \ + -j iceberg-flink-runtime-$ICEBERG_VERSION.jar \ + -j iceberg-aws-bundle-$ICEBERG_VERSION.jar \ + shell +``` + +With those dependencies, you can create a Flink catalog like the following: + +```sql +CREATE CATALOG my_catalog WITH ( + 'type'='iceberg', + 'warehouse'='s3://my-bucket/my/key/prefix', + 'catalog-type'='glue', + 'io-impl'='org.apache.iceberg.aws.s3.S3FileIO' +); +``` + +You can also specify the catalog configurations in `sql-client-defaults.yaml` to preload it: + +```yaml +catalogs: + - name: my_catalog + type: iceberg + warehouse: s3://my-bucket/my/key/prefix + catalog-type: glue + io-impl: org.apache.iceberg.aws.s3.S3FileIO +``` + +### Hive + +To use AWS module with Hive, you can download the necessary dependencies similar to the Flink example, +and then add them to the Hive classpath or add the jars at runtime in CLI: + +``` +add jar /my/path/to/iceberg-hive-runtime.jar; +add jar /my/path/to/aws/bundle.jar; +``` + +With those dependencies, you can register a Glue catalog and create external tables in Hive at runtime in CLI by: + +```sql +SET iceberg.engine.hive.enabled=true; +SET hive.vectorized.execution.enabled=false; +SET iceberg.catalog.glue.type=glue; +SET iceberg.catalog.glue.warehouse=s3://my-bucket/my/key/prefix; + +-- suppose you have an Iceberg table database_a.table_a created by GlueCatalog +CREATE EXTERNAL TABLE database_a.table_a +STORED BY 'org.apache.iceberg.mr.hive.HiveIcebergStorageHandler' +TBLPROPERTIES ('iceberg.catalog'='glue'); +``` + +You can also preload the catalog by setting the configurations above in `hive-site.xml`. + +## Catalogs + +There are multiple different options that users can choose to build an Iceberg catalog with AWS. + +### Glue Catalog + +Iceberg enables the use of [AWS Glue](https://aws.amazon.com/glue) as the `Catalog` implementation. +When used, an Iceberg namespace is stored as a [Glue Database](https://docs.aws.amazon.com/glue/latest/dg/aws-glue-api-catalog-databases.html), +an Iceberg table is stored as a [Glue Table](https://docs.aws.amazon.com/glue/latest/dg/aws-glue-api-catalog-tables.html), +and every Iceberg table version is stored as a [Glue TableVersion](https://docs.aws.amazon.com/glue/latest/dg/aws-glue-api-catalog-tables.html#aws-glue-api-catalog-tables-TableVersion). +You can start using Glue catalog by specifying the `catalog-impl` as `org.apache.iceberg.aws.glue.GlueCatalog` +or by setting `catalog-type` as `glue`, +just like what is shown in the [enabling AWS integration](#enabling-aws-integration) section above. +More details about loading the catalog can be found in individual engine pages, such as [Spark](spark-configuration.md#loading-a-custom-catalog) and [Flink](flink.md#creating-catalogs-and-using-catalogs). + +#### Glue Catalog ID + +There is a unique Glue metastore in each AWS account and each AWS region. +By default, `GlueCatalog` chooses the Glue metastore to use based on the user's default AWS client credential and region setup. +You can specify the Glue catalog ID through `glue.id` catalog property to point to a Glue catalog in a different AWS account. +The Glue catalog ID is your numeric AWS account ID. +If the Glue catalog is in a different region, you should configure your AWS client to point to the correct region, +see more details in [AWS client customization](#aws-client-customization). + +#### Skip Archive + +AWS Glue has the ability to archive older table versions and a user can roll back the table to any historical version if needed. +By default, the Iceberg Glue Catalog will skip the archival of older table versions. +If a user wishes to archive older table versions, they can set `glue.skip-archive` to false. +Do note for streaming ingestion into Iceberg tables, setting `glue.skip-archive` to false will quickly create a lot of Glue table versions. +For more details, please read [Glue Quotas](https://docs.aws.amazon.com/general/latest/gr/glue.html) and the [UpdateTable API](https://docs.aws.amazon.com/glue/latest/webapi/API_UpdateTable.html). + +#### Skip Name Validation + +Allow user to skip name validation for table name and namespaces. +It is recommended to stick to [Glue best practices](https://docs.aws.amazon.com/athena/latest/ug/glue-best-practices.html) +to make sure operations are Hive compatible. +This is only added for users that have existing conventions using non-standard characters. When database name +and table name validation are skipped, there is no guarantee that downstream systems would all support the names. + +#### Optimistic Locking + +By default, Iceberg uses Glue's optimistic locking for concurrent updates to a table. +With optimistic locking, each table has a version id. +If users retrieve the table metadata, Iceberg records the version id of that table. +Users can update the table as long as the version ID on the server side remains unchanged. +Version mismatch occurs if someone else modified the table before you did, causing an update failure. +Iceberg then refreshes metadata and checks if there is a conflict. +If there is no commit conflict, the operation will be retried. +Optimistic locking guarantees atomic transaction of Iceberg tables in Glue. +It also prevents others from accidentally overwriting your changes. + +!!! info + Please use AWS SDK version >= 2.17.131 to leverage Glue's Optimistic Locking. + If the AWS SDK version is below 2.17.131, only in-memory lock is used. To ensure atomic transaction, you need to set up a [DynamoDb Lock Manager](#dynamodb-lock-manager). + + +#### Warehouse Location + +Similar to all other catalog implementations, `warehouse` is a required catalog property to determine the root path of the data warehouse in storage. +By default, Glue only allows a warehouse location in S3 because of the use of `S3FileIO`. +To store data in a different local or cloud store, Glue catalog can switch to use `HadoopFileIO` or any custom FileIO by setting the `io-impl` catalog property. +Details about this feature can be found in the [custom FileIO](custom-catalog.md#custom-file-io-implementation) section. + +#### Table Location + +By default, the root location for a table `my_table` of namespace `my_ns` is at `my-warehouse-location/my-ns.db/my-table`. +This default root location can be changed at both namespace and table level. + +To use a different path prefix for all tables under a namespace, use AWS console or any AWS Glue client SDK you like to update the `locationUri` attribute of the corresponding Glue database. +For example, you can update the `locationUri` of `my_ns` to `s3://my-ns-bucket`, +then any newly created table will have a default root location under the new prefix. +For instance, a new table `my_table_2` will have its root location at `s3://my-ns-bucket/my_table_2`. + +To use a completely different root path for a specific table, set the `location` table property to the desired root path value you want. +For example, in Spark SQL you can do: + +```sql +CREATE TABLE my_catalog.my_ns.my_table ( + id bigint, + data string, + category string) +USING iceberg +OPTIONS ('location'='s3://my-special-table-bucket') +PARTITIONED BY (category); +``` + +For engines like Spark that support the `LOCATION` keyword, the above SQL statement is equivalent to: + +```sql +CREATE TABLE my_catalog.my_ns.my_table ( + id bigint, + data string, + category string) +USING iceberg +LOCATION 's3://my-special-table-bucket' +PARTITIONED BY (category); +``` + +### DynamoDB Catalog + +Iceberg supports using a [DynamoDB](https://aws.amazon.com/dynamodb) table to record and manage database and table information. + +#### Configurations + +The DynamoDB catalog supports the following configurations: + +| Property | Default | Description | +| --------------------------------- | -------------------------------------------------- | ------------------------------------------------------ | +| dynamodb.table-name | iceberg | name of the DynamoDB table used by DynamoDbCatalog | + + +#### Internal Table Design + +The DynamoDB table is designed with the following columns: + +| Column | Key | Type | Description | +| ----------------- | --------------- | ----------- |--------------------------------------------------------------------- | +| identifier | partition key | string | table identifier such as `db1.table1`, or string `NAMESPACE` for namespaces | +| namespace | sort key | string | namespace name. A [global secondary index (GSI)](https://docs.aws.amazon.com/amazondynamodb/latest/developerguide/GSI.html) is created with namespace as partition key, identifier as sort key, no other projected columns | +| v | | string | row version, used for optimistic locking | +| updated_at | | number | timestamp (millis) of the last update | +| created_at | | number | timestamp (millis) of the table creation | +| p.<property_key\> | | string | Iceberg-defined table properties including `table_type`, `metadata_location` and `previous_metadata_location` or namespace properties + +This design has the following benefits: + +1. it avoids potential [hot partition issue](https://aws.amazon.com/premiumsupport/knowledge-center/dynamodb-table-throttled/) if there are heavy write traffic to the tables within the same namespace because the partition key is at the table level +2. namespace operations are clustered in a single partition to avoid affecting table commit operations +3. a sort key to partition key reverse GSI is used for list table operation, and all other operations are single row ops or single partition query. No full table scan is needed for any operation in the catalog. +4. a string UUID version field `v` is used instead of `updated_at` to avoid 2 processes committing at the same millisecond +5. multi-row transaction is used for `catalog.renameTable` to ensure idempotency +6. properties are flattened as top level columns so that user can add custom GSI on any property field to customize the catalog. For example, users can store owner information as table property `owner`, and search tables by owner by adding a GSI on the `p.owner` column. + +### RDS JDBC Catalog + +Iceberg also supports the JDBC catalog which uses a table in a relational database to manage Iceberg tables. +You can configure to use the JDBC catalog with relational database services like [AWS RDS](https://aws.amazon.com/rds). +Read [the JDBC integration page](jdbc.md#jdbc-catalog) for guides and examples about using the JDBC catalog. +Read [this AWS documentation](https://docs.aws.amazon.com/AmazonRDS/latest/UserGuide/UsingWithRDS.IAMDBAuth.Connecting.Java.html) for more details about configuring the JDBC catalog with IAM authentication. + +### Which catalog to choose? + +With all the available options, we offer the following guidelines when choosing the right catalog to use for your application: + +1. if your organization has an existing Glue metastore or plans to use the AWS analytics ecosystem including Glue, [Athena](https://aws.amazon.com/athena), [EMR](https://aws.amazon.com/emr), [Redshift](https://aws.amazon.com/redshift) and [LakeFormation](https://aws.amazon.com/lake-formation), Glue catalog provides the easiest integration. +2. if your application requires frequent updates to table or high read and write throughput (e.g. streaming write), Glue and DynamoDB catalog provides the best performance through optimistic locking. +3. if you would like to enforce access control for tables in a catalog, Glue tables can be managed as an [IAM resource](https://docs.aws.amazon.com/service-authorization/latest/reference/list_awsglue.html), whereas DynamoDB catalog tables can only be managed through [item-level permission](https://docs.aws.amazon.com/amazondynamodb/latest/developerguide/specifying-conditions.html) which is much more complicated. +4. if you would like to query tables based on table property information without the need to scan the entire catalog, DynamoDB catalog allows you to build secondary indexes for any arbitrary property field and provide efficient query performance. +5. if you would like to have the benefit of DynamoDB catalog while also connect to Glue, you can enable [DynamoDB stream with Lambda trigger](https://docs.aws.amazon.com/amazondynamodb/latest/developerguide/Streams.Lambda.Tutorial.html) to asynchronously update your Glue metastore with table information in the DynamoDB catalog. +6. if your organization already maintains an existing relational database in RDS or uses [serverless Aurora](https://aws.amazon.com/rds/aurora/serverless/) to manage tables, the JDBC catalog provides the easiest integration. + +## DynamoDb Lock Manager + +[Amazon DynamoDB](https://aws.amazon.com/dynamodb) can be used by `HadoopCatalog` or `HadoopTables` so that for every commit, +the catalog first obtains a lock using a helper DynamoDB table and then try to safely modify the Iceberg table. +This is necessary for a file system-based catalog to ensure atomic transaction in storages like S3 that do not provide file write mutual exclusion. + +This feature requires the following lock related catalog properties: + +1. Set `lock-impl` as `org.apache.iceberg.aws.dynamodb.DynamoDbLockManager`. +2. Set `lock.table` as the DynamoDB table name you would like to use. If the lock table with the given name does not exist in DynamoDB, a new table is created with billing mode set as [pay-per-request](https://aws.amazon.com/blogs/aws/amazon-dynamodb-on-demand-no-capacity-planning-and-pay-per-request-pricing). + +Other lock related catalog properties can also be used to adjust locking behaviors such as heartbeat interval. +For more details, please refer to [Lock catalog properties](configuration.md#lock-catalog-properties). + + +## S3 FileIO + +Iceberg allows users to write data to S3 through `S3FileIO`. +`GlueCatalog` by default uses this `FileIO`, and other catalogs can load this `FileIO` using the `io-impl` catalog property. + +### Progressive Multipart Upload + +`S3FileIO` implements a customized progressive multipart upload algorithm to upload data. +Data files are uploaded by parts in parallel as soon as each part is ready, +and each file part is deleted as soon as its upload process completes. +This provides maximized upload speed and minimized local disk usage during uploads. +Here are the configurations that users can tune related to this feature: + +| Property | Default | Description | +| --------------------------------- | -------------------------------------------------- | ------------------------------------------------------ | +| s3.multipart.num-threads | the available number of processors in the system | number of threads to use for uploading parts to S3 (shared across all output streams) | +| s3.multipart.part-size-bytes | 32MB | the size of a single part for multipart upload requests | +| s3.multipart.threshold | 1.5 | the threshold expressed as a factor times the multipart size at which to switch from uploading using a single put object request to uploading using multipart upload | +| s3.staging-dir | `java.io.tmpdir` property value | the directory to hold temporary files | + +### S3 Server Side Encryption + +`S3FileIO` supports all 3 S3 server side encryption modes: + +* [SSE-S3](https://docs.aws.amazon.com/AmazonS3/latest/dev/UsingServerSideEncryption.html): When you use Server-Side Encryption with Amazon S3-Managed Keys (SSE-S3), each object is encrypted with a unique key. As an additional safeguard, it encrypts the key itself with a master key that it regularly rotates. Amazon S3 server-side encryption uses one of the strongest block ciphers available, 256-bit Advanced Encryption Standard (AES-256), to encrypt your data. +* [SSE-KMS](https://docs.aws.amazon.com/AmazonS3/latest/dev/UsingKMSEncryption.html): Server-Side Encryption with Customer Master Keys (CMKs) Stored in AWS Key Management Service (SSE-KMS) is similar to SSE-S3, but with some additional benefits and charges for using this service. There are separate permissions for the use of a CMK that provides added protection against unauthorized access of your objects in Amazon S3. SSE-KMS also provides you with an audit trail that shows when your CMK was used and by whom. Additionally, you can create and manage customer managed CMKs or use AWS managed CMKs that are unique to you, your service, and your Region. +* [DSSE-KMS](https://docs.aws.amazon.com/AmazonS3/latest/userguide/UsingDSSEncryption.html): Dual-layer Server-Side Encryption with AWS Key Management Service keys (DSSE-KMS) is similar to SSE-KMS, but applies two layers of encryption to objects when they are uploaded to Amazon S3. DSSE-KMS can be used to fulfill compliance standards that require you to apply multilayer encryption to your data and have full control of your encryption keys. +* [SSE-C](https://docs.aws.amazon.com/AmazonS3/latest/dev/ServerSideEncryptionCustomerKeys.html): With Server-Side Encryption with Customer-Provided Keys (SSE-C), you manage the encryption keys and Amazon S3 manages the encryption, as it writes to disks, and decryption when you access your objects. + +To enable server side encryption, use the following configuration properties: + +| Property | Default | Description | +| --------------------------------- | ---------------------------------------- | ------------------------------------------------------ | +| s3.sse.type | `none` | `none`, `s3`, `kms`, `dsse-kms` or `custom` | +| s3.sse.key | `aws/s3` for `kms` and `dsse-kms` types, null otherwise | A KMS Key ID or ARN for `kms` and `dsse-kms` types, or a custom base-64 AES256 symmetric key for `custom` type. | +| s3.sse.md5 | null | If SSE type is `custom`, this value must be set as the base-64 MD5 digest of the symmetric key to ensure integrity. | + +### S3 Access Control List + +`S3FileIO` supports S3 access control list (ACL) for detailed access control. +User can choose the ACL level by setting the `s3.acl` property. +For more details, please read [S3 ACL Documentation](https://docs.aws.amazon.com/AmazonS3/latest/dev/acl-overview.html). + +### Object Store File Layout + +S3 and many other cloud storage services [throttle requests based on object prefix](https://aws.amazon.com/premiumsupport/knowledge-center/s3-request-limit-avoid-throttling/). +Data stored in S3 with a traditional Hive storage layout can face S3 request throttling as objects are stored under the same file path prefix. + +Iceberg by default uses the Hive storage layout but can be switched to use the `ObjectStoreLocationProvider`. +With `ObjectStoreLocationProvider`, a deterministic hash is generated for each stored file, with the hash appended +directly after the `write.data.path`. This ensures files written to S3 are equally distributed across multiple +[prefixes](https://aws.amazon.com/premiumsupport/knowledge-center/s3-object-key-naming-pattern/) in the S3 bucket; +resulting in minimized throttling and maximized throughput for S3-related IO operations. When using `ObjectStoreLocationProvider` +having a shared `write.data.path` across your Iceberg tables will improve performance. + +For more information on how S3 scales API QPS, check out the 2018 re:Invent session on [Best Practices for Amazon S3 and Amazon S3 Glacier](https://youtu.be/rHeTn9pHNKo?t=3219). At [53:39](https://youtu.be/rHeTn9pHNKo?t=3219) it covers how S3 scales/partitions & at [54:50](https://youtu.be/rHeTn9pHNKo?t=3290) it discusses the 30-60 minute wait time before new partitions are created. + +To use the `ObjectStorageLocationProvider` add `'write.object-storage.enabled'=true` in the table's properties. +Below is an example Spark SQL command to create a table using the `ObjectStorageLocationProvider`: +```sql +CREATE TABLE my_catalog.my_ns.my_table ( + id bigint, + data string, + category string) +USING iceberg +OPTIONS ( + 'write.object-storage.enabled'=true, + 'write.data.path'='s3://my-table-data-bucket/my_table') +PARTITIONED BY (category); +``` + +We can then insert a single row into this new table +```SQL +INSERT INTO my_catalog.my_ns.my_table VALUES (1, "Pizza", "orders"); +``` + +Which will write the data to S3 with a 20-bit base2 hash (`01010110100110110010`) appended directly after the `write.object-storage.path`, +ensuring reads to the table are spread evenly across [S3 bucket prefixes](https://docs.aws.amazon.com/AmazonS3/latest/userguide/optimizing-performance.html), and improving performance. +Previously provided base64 hash was updated to base2 in order to provide an improved auto-scaling behavior on S3 General Purpose Buckets. + +As part of this update, we have also divided the entropy into multiple directories in order to improve the efficiency of the +orphan clean up process for Iceberg since directories are used as a mean to divide the work across workers for faster traversal. You +can see from the example below that we divide the hash to create 4-bit directories with a depth of 3 and attach the final part of the hash to +the end. +``` +s3://my-table-data-bucket/my_ns.db/my_table/0101/0110/1001/10110010/category=orders/00000-0-5affc076-96a4-48f2-9cd2-d5efbc9f0c94-00001.parquet +``` + +Note, the path resolution logic for `ObjectStoreLocationProvider` is `write.data.path` then `<tableLocation>/data`. + +However, for the older versions up to 0.12.0, the logic is as follows: + +- before 0.12.0, `write.object-storage.path` must be set. +- at 0.12.0, `write.object-storage.path` then `write.folder-storage.path` then `<tableLocation>/data`. +- at 2.0.0 `write.object-storage.path` and `write.folder-storage.path` will be removed + +For more details, please refer to the [LocationProvider Configuration](custom-catalog.md#custom-location-provider-implementation) section. + +We have also added a new table property `write.object-storage.partitioned-paths` that if set to false(default=true), this will +omit the partition values from the file path. Iceberg does not need these values in the file path and setting this value to false +can further reduce the key size. In this case, we also append the final 8 bit of entropy directly to the file name. +Inserted key would look like the following with this config set, note that `category=orders` is removed: +``` +s3://my-table-data-bucket/my_ns.db/my_table/1101/0100/1011/00111010-00000-0-5affc076-96a4-48f2-9cd2-d5efbc9f0c94-00001.parquet +``` + +### S3 Retries + +Workloads which encounter S3 throttling should persistently retry, with exponential backoff, to make progress while S3 +automatically scales. We provide the configurations below to adjust S3 retries for this purpose. For workloads that encounter +throttling and fail due to retry exhaustion, we recommend retry count to set 32 in order allow S3 to auto-scale. Note that +workloads with exceptionally high throughput against tables that S3 has not yet scaled, it may be necessary to increase the retry count further. + + +| Property | Default | Description | +|----------------------|---------|---------------------------------------------------------------------------------------| +| s3.retry.num-retries | 5 | Number of times to retry S3 operations. Recommended 32 for high-throughput workloads. | +| s3.retry.min-wait-ms | 2s | Minimum wait time to retry a S3 operation. | +| s3.retry.max-wait-ms | 20s | Maximum wait time to retry a S3 read operation. | + +### S3 Strong Consistency + +In November 2020, S3 announced [strong consistency](https://aws.amazon.com/s3/consistency/) for all read operations, and Iceberg is updated to fully leverage this feature. +There is no redundant consistency wait and check which might negatively impact performance during IO operations. + +### Hadoop S3A FileSystem + +Before `S3FileIO` was introduced, many Iceberg users choose to use `HadoopFileIO` to write data to S3 through the [S3A FileSystem](https://github.com/apache/hadoop/blob/trunk/hadoop-tools/hadoop-aws/src/main/java/org/apache/hadoop/fs/s3a/S3AFileSystem.java). +As introduced in the previous sections, `S3FileIO` adopts the latest AWS clients and S3 features for optimized security and performance + and is thus recommended for S3 use cases rather than the S3A FileSystem. + +`S3FileIO` writes data with `s3://` URI scheme, but it is also compatible with schemes written by the S3A FileSystem. +This means for any table manifests containing `s3a://` or `s3n://` file paths, `S3FileIO` is still able to read them. +This feature allows people to easily switch from S3A to `S3FileIO`. + +If for any reason you have to use S3A, here are the instructions: + +1. To store data using S3A, specify the `warehouse` catalog property to be an S3A path, e.g. `s3a://my-bucket/my-warehouse` +2. For `HiveCatalog`, to also store metadata using S3A, specify the Hadoop config property `hive.metastore.warehouse.dir` to be an S3A path. +3. Add [hadoop-aws](https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-aws) as a runtime dependency of your compute engine. +4. Configure AWS settings based on [hadoop-aws documentation](https://hadoop.apache.org/docs/current/hadoop-aws/tools/hadoop-aws/index.html) (make sure you check the version, S3A configuration varies a lot based on the version you use). + +### S3 Write Checksum Verification + +To ensure integrity of uploaded objects, checksum validations for S3 writes can be turned on by setting catalog property `s3.checksum-enabled` to `true`. +This is turned off by default. + +### S3 Tags + +Custom [tags](https://docs.aws.amazon.com/AmazonS3/latest/userguide/object-tagging.html) can be added to S3 objects while writing and deleting. +For example, to write S3 tags with Spark 3.5, you can start the Spark SQL shell with: +``` +spark-sql --conf spark.sql.catalog.my_catalog=org.apache.iceberg.spark.SparkCatalog \ + --conf spark.sql.catalog.my_catalog.warehouse=s3://my-bucket/my/key/prefix \ + --conf spark.sql.catalog.my_catalog.type=glue \ + --conf spark.sql.catalog.my_catalog.io-impl=org.apache.iceberg.aws.s3.S3FileIO \ + --conf spark.sql.catalog.my_catalog.s3.write.tags.my_key1=my_val1 \ + --conf spark.sql.catalog.my_catalog.s3.write.tags.my_key2=my_val2 +``` +For the above example, the objects in S3 will be saved with tags: `my_key1=my_val1` and `my_key2=my_val2`. Do note that the specified write tags will be saved only while object creation. + +When the catalog property `s3.delete-enabled` is set to `false`, the objects are not hard-deleted from S3. +This is expected to be used in combination with S3 delete tagging, so objects are tagged and removed using [S3 lifecycle policy](https://docs.aws.amazon.com/AmazonS3/latest/userguide/object-lifecycle-mgmt.html). +The property is set to `true` by default. + +With the `s3.delete.tags` config, objects are tagged with the configured key-value pairs before deletion. +Users can configure tag-based object lifecycle policy at bucket level to transition objects to different tiers. +For example, to add S3 delete tags with Spark 3.5, you can start the Spark SQL shell with: + +``` +sh spark-sql --conf spark.sql.catalog.my_catalog=org.apache.iceberg.spark.SparkCatalog \ + --conf spark.sql.catalog.my_catalog.warehouse=s3://iceberg-warehouse/s3-tagging \ + --conf spark.sql.catalog.my_catalog.type=glue \ + --conf spark.sql.catalog.my_catalog.io-impl=org.apache.iceberg.aws.s3.S3FileIO \ + --conf spark.sql.catalog.my_catalog.s3.delete.tags.my_key3=my_val3 \ + --conf spark.sql.catalog.my_catalog.s3.delete-enabled=false +``` + +For the above example, the objects in S3 will be saved with tags: `my_key3=my_val3` before deletion. +Users can also use the catalog property `s3.delete.num-threads` to mention the number of threads to be used for adding delete tags to the S3 objects. + +When the catalog property `s3.write.table-tag-enabled` and `s3.write.namespace-tag-enabled` is set to `true` then the objects in S3 will be saved with tags: `iceberg.table=<table-name>` and `iceberg.namespace=<namespace-name>`. +Users can define access and data retention policy per namespace or table based on these tags. +For example, to write table and namespace name as S3 tags with Spark 3.5, you can start the Spark SQL shell with: +``` +sh spark-sql --conf spark.sql.catalog.my_catalog=org.apache.iceberg.spark.SparkCatalog \ + --conf spark.sql.catalog.my_catalog.warehouse=s3://iceberg-warehouse/s3-tagging \ + --conf spark.sql.catalog.my_catalog.type=glue \ + --conf spark.sql.catalog.my_catalog.io-impl=org.apache.iceberg.aws.s3.S3FileIO \ + --conf spark.sql.catalog.my_catalog.s3.write.table-tag-enabled=true \ + --conf spark.sql.catalog.my_catalog.s3.write.namespace-tag-enabled=true +``` +For more details on tag restrictions, please refer [User-Defined Tag Restrictions](https://docs.aws.amazon.com/AmazonS3/latest/userguide/tagging-managing.html). + +### S3 Access Points + +[Access Points](https://docs.aws.amazon.com/AmazonS3/latest/userguide/using-access-points.html) can be used to perform +S3 operations by specifying a mapping of bucket to access points. This is useful for multi-region access, cross-region access, +disaster recovery, etc. + +For using cross-region access points, we need to additionally set `use-arn-region-enabled` catalog property to +`true` to enable `S3FileIO` to make cross-region calls, it's not required for same / multi-region access points. + +For example, to use S3 access-point with Spark 3.5, you can start the Spark SQL shell with: +``` +spark-sql --conf spark.sql.catalog.my_catalog=org.apache.iceberg.spark.SparkCatalog \ + --conf spark.sql.catalog.my_catalog.warehouse=s3://my-bucket2/my/key/prefix \ + --conf spark.sql.catalog.my_catalog.type=glue \ + --conf spark.sql.catalog.my_catalog.io-impl=org.apache.iceberg.aws.s3.S3FileIO \ + --conf spark.sql.catalog.my_catalog.s3.use-arn-region-enabled=false \ + --conf spark.sql.catalog.my_catalog.s3.access-points.my-bucket1=arn:aws:s3::<ACCOUNT_ID>:accesspoint/<MRAP_ALIAS> \ + --conf spark.sql.catalog.my_catalog.s3.access-points.my-bucket2=arn:aws:s3::<ACCOUNT_ID>:accesspoint/<MRAP_ALIAS> +``` +For the above example, the objects in S3 on `my-bucket1` and `my-bucket2` buckets will use `arn:aws:s3::<ACCOUNT_ID>:accesspoint/<MRAP_ALIAS>` +access-point for all S3 operations. + +For more details on using access-points, please refer [Using access points with compatible Amazon S3 operations](https://docs.aws.amazon.com/AmazonS3/latest/userguide/access-points-usage-examples.html), [Sample notebook](https://github.com/aws-samples/quant-research/tree/main) . + +### S3 Access Grants + +[S3 Access Grants](https://aws.amazon.com/s3/features/access-grants/) can be used to grant accesses to S3 data using IAM Principals. +In order to enable S3 Access Grants to work in Iceberg, you can set the `s3.access-grants.enabled` catalog property to `true` after +you add the [S3 Access Grants Plugin jar](https://github.com/aws/aws-s3-accessgrants-plugin-java-v2) to your classpath. A link +to the Maven listing for this plugin can be found [here](https://mvnrepository.com/artifact/software.amazon.s3.accessgrants/aws-s3-accessgrants-java-plugin). + +In addition, we allow the [fallback-to-IAM configuration](https://github.com/aws/aws-s3-accessgrants-plugin-java-v2) which allows +you to fallback to using your IAM role (and its permission sets directly) to access your S3 data in the case the S3 Access Grants +is unable to authorize your S3 call. This can be done using the `s3.access-grants.fallback-to-iam` boolean catalog property. By default, +this property is set to `false`. + +For example, to add the S3 Access Grants Integration with Spark 3.5, you can start the Spark SQL shell with: +``` +spark-sql --conf spark.sql.catalog.my_catalog=org.apache.iceberg.spark.SparkCatalog \ + --conf spark.sql.catalog.my_catalog.warehouse=s3://my-bucket2/my/key/prefix \ + --conf spark.sql.catalog.my_catalog.catalog-impl=org.apache.iceberg.aws.glue.GlueCatalog \ + --conf spark.sql.catalog.my_catalog.io-impl=org.apache.iceberg.aws.s3.S3FileIO \ + --conf spark.sql.catalog.my_catalog.s3.access-grants.enabled=true \ + --conf spark.sql.catalog.my_catalog.s3.access-grants.fallback-to-iam=true +``` + +For more details on using S3 Access Grants, please refer to [Managing access with S3 Access Grants](https://docs.aws.amazon.com/AmazonS3/latest/userguide/access-grants.html). + +### S3 Cross-Region Access + +S3 Cross-Region bucket access can be turned on by setting catalog property `s3.cross-region-access-enabled` to `true`. +This is turned off by default to avoid first S3 API call increased latency. + +For example, to enable S3 Cross-Region bucket access with Spark 3.5, you can start the Spark SQL shell with: +``` +spark-sql --conf spark.sql.catalog.my_catalog=org.apache.iceberg.spark.SparkCatalog \ + --conf spark.sql.catalog.my_catalog.warehouse=s3://my-bucket2/my/key/prefix \ + --conf spark.sql.catalog.my_catalog.type=glue \ + --conf spark.sql.catalog.my_catalog.io-impl=org.apache.iceberg.aws.s3.S3FileIO \ + --conf spark.sql.catalog.my_catalog.s3.cross-region-access-enabled=true +``` + +For more details, please refer to [Cross-Region access for Amazon S3](https://docs.aws.amazon.com/sdk-for-java/latest/developer-guide/s3-cross-region.html). + +### S3 Acceleration + +[S3 Acceleration](https://aws.amazon.com/s3/transfer-acceleration/) can be used to speed up transfers to and from Amazon S3 by as much as 50-500% for long-distance transfer of larger objects. + +To use S3 Acceleration, we need to set `s3.acceleration-enabled` catalog property to `true` to enable `S3FileIO` to make accelerated S3 calls. + +For example, to use S3 Acceleration with Spark 3.5, you can start the Spark SQL shell with: +``` +spark-sql --conf spark.sql.catalog.my_catalog=org.apache.iceberg.spark.SparkCatalog \ + --conf spark.sql.catalog.my_catalog.warehouse=s3://my-bucket2/my/key/prefix \ + --conf spark.sql.catalog.my_catalog.type=glue \ + --conf spark.sql.catalog.my_catalog.io-impl=org.apache.iceberg.aws.s3.S3FileIO \ + --conf spark.sql.catalog.my_catalog.s3.acceleration-enabled=true +``` + +For more details on using S3 Acceleration, please refer to [Configuring fast, secure file transfers using Amazon S3 Transfer Acceleration](https://docs.aws.amazon.com/AmazonS3/latest/userguide/transfer-acceleration.html). + +### S3 Analytics Accelerator + +The [Analytics Accelerator Library for Amazon S3](https://github.com/awslabs/analytics-accelerator-s3) helps you accelerate access to Amazon S3 data from your applications. This open-source solution reduces processing times and compute costs for your data analytics workloads. + +In order to enable S3 Analytics Accelerator Library to work in Iceberg, you can set the `s3.analytics-accelerator.enabled` catalog property to `true`. By default, this property is set to `false`. + +For example, to use S3 Analytics Accelerator with Spark, you can start the Spark SQL shell with: +``` +spark-sql --conf spark.sql.catalog.my_catalog=org.apache.iceberg.spark.SparkCatalog \ + --conf spark.sql.catalog.my_catalog.warehouse=s3://my-bucket2/my/key/prefix \ + --conf spark.sql.catalog.my_catalog.type=glue \ + --conf spark.sql.catalog.my_catalog.io-impl=org.apache.iceberg.aws.s3.S3FileIO \ + --conf spark.sql.catalog.my_catalog.s3.analytics-accelerator.enabled=true +``` + +The Analytics Accelerator Library can work with either the [S3 CRT client](https://docs.aws.amazon.com/sdk-for-java/latest/developer-guide/crt-based-s3-client.html) or the [S3AsyncClient](https://sdk.amazonaws.com/java/api/latest/software/amazon/awssdk/services/s3/S3AsyncClient.html). The library recommends that you use the S3 CRT client due to its enhanced connection pool management and [higher throughput on downloads](https://aws.amazon.com/blogs/developer/introducing-crt-based-s3-client-and-the-s3-transfer-manager-in-the-aws-sdk-for-java-2-x/). + +#### Client Configuration + +| Property | Default | Description | +|------------------------|---------|--------------------------------------------------------------| +| s3.crt.enabled | `true` | Controls if the S3 Async clients should be created using CRT | +| s3.crt.max-concurrency | `500` | Max concurrency for S3 CRT clients | + +Additional library specific configurations are organized into the following sections: + +#### Logical IO Configuration + +| Property | Default | Description | +|------------------------------------------------------------------------|-----------------------|----------------------------------------------------------------------------| +| s3.analytics-accelerator.logicalio.prefetch.footer.enabled | `true` | Controls whether footer prefetching is enabled | +| s3.analytics-accelerator.logicalio.prefetch.page.index.enabled | `true` | Controls whether page index prefetching is enabled | +| s3.analytics-accelerator.logicalio.prefetch.file.metadata.size | `32KB` | Size of metadata to prefetch for regular files | +| s3.analytics-accelerator.logicalio.prefetch.large.file.metadata.size | `1MB` | Size of metadata to prefetch for large files | +| s3.analytics-accelerator.logicalio.prefetch.file.page.index.size | `1MB` | Size of page index to prefetch for regular files | +| s3.analytics-accelerator.logicalio.prefetch.large.file.page.index.size | `8MB` | Size of page index to prefetch for large files | +| s3.analytics-accelerator.logicalio.large.file.size | `1GB` | Threshold to consider a file as large | +| s3.analytics-accelerator.logicalio.small.objects.prefetching.enabled | `true` | Controls prefetching for small objects | +| s3.analytics-accelerator.logicalio.small.object.size.threshold | `3MB` | Size threshold for small object prefetching | +| s3.analytics-accelerator.logicalio.parquet.metadata.store.size | `45` | Size of the parquet metadata store | +| s3.analytics-accelerator.logicalio.max.column.access.store.size | `15` | Maximum size of column access store | +| s3.analytics-accelerator.logicalio.parquet.format.selector.regex | `^.*.(parquet\|par)$` | Regex pattern to identify parquet files | +| s3.analytics-accelerator.logicalio.prefetching.mode | `ROW_GROUP` | Prefetching mode (valid values: `OFF`, `ALL`, `ROW_GROUP`, `COLUMN_BOUND`) | + +#### Physical IO Configuration + +| Property | Default | Description | +|--------------------------------------------------------------|---------|---------------------------------------------| +| s3.analytics-accelerator.physicalio.metadatastore.capacity | `50` | Capacity of the metadata store | +| s3.analytics-accelerator.physicalio.blocksizebytes | `8MB` | Size of blocks for data transfer | +| s3.analytics-accelerator.physicalio.readaheadbytes | `64KB` | Number of bytes to read ahead | +| s3.analytics-accelerator.physicalio.maxrangesizebytes | `8MB` | Maximum size of range requests | +| s3.analytics-accelerator.physicalio.partsizebytes | `8MB` | Size of individual parts for transfer | +| s3.analytics-accelerator.physicalio.sequentialprefetch.base | `2.0` | Base factor for sequential prefetch sizing | +| s3.analytics-accelerator.physicalio.sequentialprefetch.speed | `1.0` | Speed factor for sequential prefetch growth | + +#### Telemetry Configuration + +| Property | Default | Description | +|------------------------------------------------------------------------|-------------------------------------|--------------------------------------------------------------------------| +| s3.analytics-accelerator.telemetry.level | `STANDARD` | Telemetry detail level (valid values: `CRITICAL`, `STANDARD`, `VERBOSE`) | +| s3.analytics-accelerator.telemetry.std.out.enabled | `false` | Enable stdout telemetry output | +| s3.analytics-accelerator.telemetry.logging.enabled | `true` | Enable logging telemetry output | +| s3.analytics-accelerator.telemetry.aggregations.enabled | `false` | Enable telemetry aggregations | +| s3.analytics-accelerator.telemetry.aggregations.flush.interval.seconds | `-1` | Interval to flush aggregated telemetry | +| s3.analytics-accelerator.telemetry.logging.level | `INFO` | Log level for telemetry | +| s3.analytics-accelerator.telemetry.logging.name | `com.amazon.connector.s3.telemetry` | Logger name for telemetry | +| s3.analytics-accelerator.telemetry.format | `default` | Telemetry output format (valid values: `json`, `default`) | + +#### Object Client Configuration + +| Property | Default | Description | +|------------------------------------------|---------|----------------------------------------------------------------| +| s3.analytics-accelerator.useragentprefix | `null` | Custom prefix to add to the `User-Agent` string in S3 requests | + +### S3 Dual-stack + +[S3 Dual-stack](https://docs.aws.amazon.com/AmazonS3/latest/userguide/dual-stack-endpoints.html) allows a client to access an S3 bucket through a dual-stack endpoint. +When clients request a dual-stack endpoint, the bucket URL resolves to an IPv6 address if possible, otherwise fallback to IPv4. + +To use S3 Dual-stack, we need to set `s3.dualstack-enabled` catalog property to `true` to enable `S3FileIO` to make dual-stack S3 calls. + +For example, to use S3 Dual-stack with Spark 3.5, you can start the Spark SQL shell with: +``` +spark-sql --conf spark.sql.catalog.my_catalog=org.apache.iceberg.spark.SparkCatalog \ + --conf spark.sql.catalog.my_catalog.warehouse=s3://my-bucket2/my/key/prefix \ + --conf spark.sql.catalog.my_catalog.type=glue \ + --conf spark.sql.catalog.my_catalog.io-impl=org.apache.iceberg.aws.s3.S3FileIO \ + --conf spark.sql.catalog.my_catalog.s3.dualstack-enabled=true +``` + +For more details on using S3 Dual-stack, please refer [Using dual-stack endpoints from the AWS CLI and the AWS SDKs](https://docs.aws.amazon.com/AmazonS3/latest/userguide/dual-stack-endpoints.html#dual-stack-endpoints-cli) + +## AWS Client Customization + +Many organizations have customized their way of configuring AWS clients with their own credential provider, access proxy, retry strategy, etc. +Iceberg allows users to plug in their own implementation of `org.apache.iceberg.aws.AwsClientFactory` by setting the `client.factory` catalog property. + +### Cross-Account and Cross-Region Access + +It is a common use case for organizations to have a centralized AWS account for Glue metastore and S3 buckets, and use different AWS accounts and regions for different teams to access those resources. +In this case, a [cross-account IAM role](https://docs.aws.amazon.com/IAM/latest/UserGuide/id_roles_use.html) is needed to access those centralized resources. +Iceberg provides an AWS client factory `AssumeRoleAwsClientFactory` to support this common use case. +This also serves as an example for users who would like to implement their own AWS client factory. + +This client factory has the following configurable catalog properties: + +| Property | Default | Description | +| --------------------------------- | ---------------------------------------- | ------------------------------------------------------ | +| client.assume-role.arn | null, requires user input | ARN of the role to assume, e.g. arn:aws:iam::123456789:role/myRoleToAssume | +| client.assume-role.region | null, requires user input | All AWS clients except the STS client will use the given region instead of the default region chain | +| client.assume-role.external-id | null | An optional [external ID](https://docs.aws.amazon.com/IAM/latest/UserGuide/id_roles_create_for-user_externalid.html) | +| client.assume-role.timeout-sec | 1 hour | Timeout of each assume role session. At the end of the timeout, a new set of role session credentials will be fetched through an STS client. | + +By using this client factory, an STS client is initialized with the default credential and region to assume the specified role. +The Glue, S3 and DynamoDB clients are then initialized with the assume-role credential and region to access resources. +Here is an example to start Spark shell with this client factory: + +```shell +spark-sql --packages org.apache.iceberg:iceberg-spark-runtime-3.4_2.12:{{ icebergVersion }},org.apache.iceberg:iceberg-aws-bundle:{{ icebergVersion }} \ + --conf spark.sql.catalog.my_catalog=org.apache.iceberg.spark.SparkCatalog \ + --conf spark.sql.catalog.my_catalog.warehouse=s3://my-bucket/my/key/prefix \ + --conf spark.sql.catalog.my_catalog.type=glue \ + --conf spark.sql.catalog.my_catalog.client.factory=org.apache.iceberg.aws.AssumeRoleAwsClientFactory \ + --conf spark.sql.catalog.my_catalog.client.assume-role.arn=arn:aws:iam::123456789:role/myRoleToAssume \ + --conf spark.sql.catalog.my_catalog.client.assume-role.region=ap-northeast-1 +``` + +### HTTP Client Configurations +AWS clients support two types of HTTP Client, [URL Connection HTTP Client](https://mvnrepository.com/artifact/software.amazon.awssdk/url-connection-client) +and [Apache HTTP Client](https://mvnrepository.com/artifact/software.amazon.awssdk/apache-client). +By default, AWS clients use **Apache** HTTP Client to communicate with the service. +This HTTP client supports various functionalities and customized settings, such as expect-continue handshake and TCP KeepAlive, at the cost of extra dependency and additional startup latency. +In contrast, URL Connection HTTP Client optimizes for minimum dependencies and startup latency but supports less functionality than other implementations. + +For more details of configuration, see sections [URL Connection HTTP Client Configurations](#url-connection-http-client-configurations) and [Apache HTTP Client Configurations](#apache-http-client-configurations). + +Configurations for the HTTP client can be set via catalog properties. Below is an overview of available configurations: + +| Property | Default | Description | +|----------------------------|---------|------------------------------------------------------------------------------------------------------------| +| http-client.type | apache | Types of HTTP Client. <br/> `urlconnection`: URL Connection HTTP Client <br/> `apache`: Apache HTTP Client | +| http-client.proxy-endpoint | null | An optional proxy endpoint to use for the HTTP client. | + +#### URL Connection HTTP Client Configurations + +URL Connection HTTP Client has the following configurable properties: + +| Property | Default | Description | +|-------------------------------------------------|---------|------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------| +| http-client.urlconnection.socket-timeout-ms | null | An optional [socket timeout](https://sdk.amazonaws.com/java/api/latest/software/amazon/awssdk/http/urlconnection/UrlConnectionHttpClient.Builder.html#socketTimeout(java.time.Duration)) in milliseconds | +| http-client.urlconnection.connection-timeout-ms | null | An optional [connection timeout](https://sdk.amazonaws.com/java/api/latest/software/amazon/awssdk/http/urlconnection/UrlConnectionHttpClient.Builder.html#connectionTimeout(java.time.Duration)) in milliseconds | + +Users can use catalog properties to override the defaults. For example, to configure the socket timeout for URL Connection HTTP Client when starting a spark shell, one can add: +```shell +--conf spark.sql.catalog.my_catalog.http-client.urlconnection.socket-timeout-ms=80 +``` + +#### Apache HTTP Client Configurations + +Apache HTTP Client has the following configurable properties: + +| Property | Default | Description | +|-------------------------------------------------------|---------------------------|---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------| +| http-client.apache.socket-timeout-ms | null | An optional [socket timeout](https://sdk.amazonaws.com/java/api/latest/software/amazon/awssdk/http/apache/ApacheHttpClient.Builder.html#socketTimeout(java.time.Duration)) in milliseconds | +| http-client.apache.connection-timeout-ms | null | An optional [connection timeout](https://sdk.amazonaws.com/java/api/latest/software/amazon/awssdk/http/apache/ApacheHttpClient.Builder.html#connectionTimeout(java.time.Duration)) in milliseconds | +| http-client.apache.connection-acquisition-timeout-ms | null | An optional [connection acquisition timeout](https://sdk.amazonaws.com/java/api/latest/software/amazon/awssdk/http/apache/ApacheHttpClient.Builder.html#connectionAcquisitionTimeout(java.time.Duration)) in milliseconds | +| http-client.apache.connection-max-idle-time-ms | null | An optional [connection max idle timeout](https://sdk.amazonaws.com/java/api/latest/software/amazon/awssdk/http/apache/ApacheHttpClient.Builder.html#connectionMaxIdleTime(java.time.Duration)) in milliseconds | +| http-client.apache.connection-time-to-live-ms | null | An optional [connection time to live](https://sdk.amazonaws.com/java/api/latest/software/amazon/awssdk/http/apache/ApacheHttpClient.Builder.html#connectionTimeToLive(java.time.Duration)) in milliseconds | +| http-client.apache.expect-continue-enabled | null, disabled by default | An optional `true/false` setting that controls whether [expect continue](https://sdk.amazonaws.com/java/api/latest/software/amazon/awssdk/http/apache/ApacheHttpClient.Builder.html#expectContinueEnabled(java.lang.Boolean)) is enabled | +| http-client.apache.max-connections | null | An optional [max connections](https://sdk.amazonaws.com/java/api/latest/software/amazon/awssdk/http/apache/ApacheHttpClient.Builder.html#maxConnections(java.lang.Integer)) in integer | +| http-client.apache.tcp-keep-alive-enabled | null, disabled by default | An optional `true/false` setting that controls whether [tcp keep alive](https://sdk.amazonaws.com/java/api/latest/software/amazon/awssdk/http/apache/ApacheHttpClient.Builder.html#tcpKeepAlive(java.lang.Boolean)) is enabled | +| http-client.apache.use-idle-connection-reaper-enabled | null, enabled by default | An optional `true/false` setting that controls whether [use idle connection reaper](https://sdk.amazonaws.com/java/api/latest/software/amazon/awssdk/http/apache/ApacheHttpClient.Builder.html#useIdleConnectionReaper(java.lang.Boolean)) is used | + +Users can use catalog properties to override the defaults. For example, to configure the max connections for Apache HTTP Client when starting a spark shell, one can add: +```shell +--conf spark.sql.catalog.my_catalog.http-client.apache.max-connections=5 +``` + +## Run Iceberg on AWS + +### Amazon Athena + +[Amazon Athena](https://aws.amazon.com/athena/) provides a serverless query engine that could be used to perform read, write, update and optimization tasks against Iceberg tables. +More details could be found [here](https://docs.aws.amazon.com/athena/latest/ug/querying-iceberg.html). + +### Amazon EMR + +[Amazon EMR](https://aws.amazon.com/emr/) can provision clusters with [Spark](https://docs.aws.amazon.com/emr/latest/ReleaseGuide/emr-spark.html) (EMR 6 for Spark 3, EMR 5 for Spark 2), +[Hive](https://docs.aws.amazon.com/emr/latest/ReleaseGuide/emr-hive.html), [Flink](https://docs.aws.amazon.com/emr/latest/ReleaseGuide/emr-flink.html), +[Trino](https://docs.aws.amazon.com/emr/latest/ReleaseGuide/emr-presto.html) that can run Iceberg. + +Starting with EMR version 6.5.0, EMR clusters can be configured to have the necessary Apache Iceberg dependencies installed without requiring bootstrap actions. +Please refer to the [official documentation](https://docs.aws.amazon.com/emr/latest/ReleaseGuide/emr-iceberg-use-cluster.html) on how to create a cluster with Iceberg installed. + +For versions before 6.5.0, you can use a [bootstrap action](https://docs.aws.amazon.com/emr/latest/ManagementGuide/emr-plan-bootstrap.html) similar to the following to pre-install all necessary dependencies: +```sh +#!/bin/bash + +ICEBERG_VERSION={{ icebergVersion }} +MAVEN_URL=https://repo1.maven.org/maven2 +ICEBERG_MAVEN_URL=$MAVEN_URL/org/apache/iceberg +# NOTE: this is just an example shared class path between Spark and Flink, +# please choose a proper class path for production. +LIB_PATH=/usr/share/aws/aws-java-sdk/ + + +ICEBERG_PACKAGES=( + "iceberg-spark-runtime-3.5_2.12" + "iceberg-flink-runtime" + "iceberg-aws-bundle" +) + +install_dependencies () { + install_path=$1 + download_url=$2 + version=$3 + shift + pkgs=("$@") + for pkg in "${pkgs[@]}"; do + sudo wget -P $install_path $download_url/$pkg/$version/$pkg-$version.jar + done +} + +install_dependencies $LIB_PATH $ICEBERG_MAVEN_URL $ICEBERG_VERSION "${ICEBERG_PACKAGES[@]}" +``` + +### AWS Glue + +[AWS Glue](https://aws.amazon.com/glue/) provides a serverless data integration service +that could be used to perform read, write and update tasks against Iceberg tables. +More details could be found [here](https://docs.aws.amazon.com/glue/latest/dg/aws-glue-programming-etl-format-iceberg.html). + + +### AWS EKS + +[AWS Elastic Kubernetes Service (EKS)](https://aws.amazon.com/eks/) can be used to start any Spark, Flink, Hive, Presto or Trino clusters to work with Iceberg. +Search the [Iceberg blogs](../../blogs.md) page for tutorials around running Iceberg with Docker and Kubernetes. + +### Amazon Kinesis + +[Amazon Kinesis Data Analytics](https://aws.amazon.com/about-aws/whats-new/2019/11/you-can-now-run-fully-managed-apache-flink-applications-with-apache-kafka/) provides a platform +to run fully managed Apache Flink applications. You can include Iceberg in your application Jar and run it in the platform. + +### AWS Redshift +[AWS Redshift Spectrum or Redshift Serverless](https://docs.aws.amazon.com/redshift/latest/dg/querying-iceberg.html) supports querying Apache Iceberg tables cataloged in the AWS Glue Data Catalog. + +### Amazon Data Firehose +You can use [Firehose](https://docs.aws.amazon.com/firehose/latest/dev/apache-iceberg-destination.html) to directly deliver streaming data to Apache Iceberg Tables in Amazon S3. With this feature, you can route records from a single stream into different Apache Iceberg Tables, and automatically apply insert, update, and delete operations to records in the Apache Iceberg Tables. This feature requires using the AWS Glue Data Catalog. \ No newline at end of file

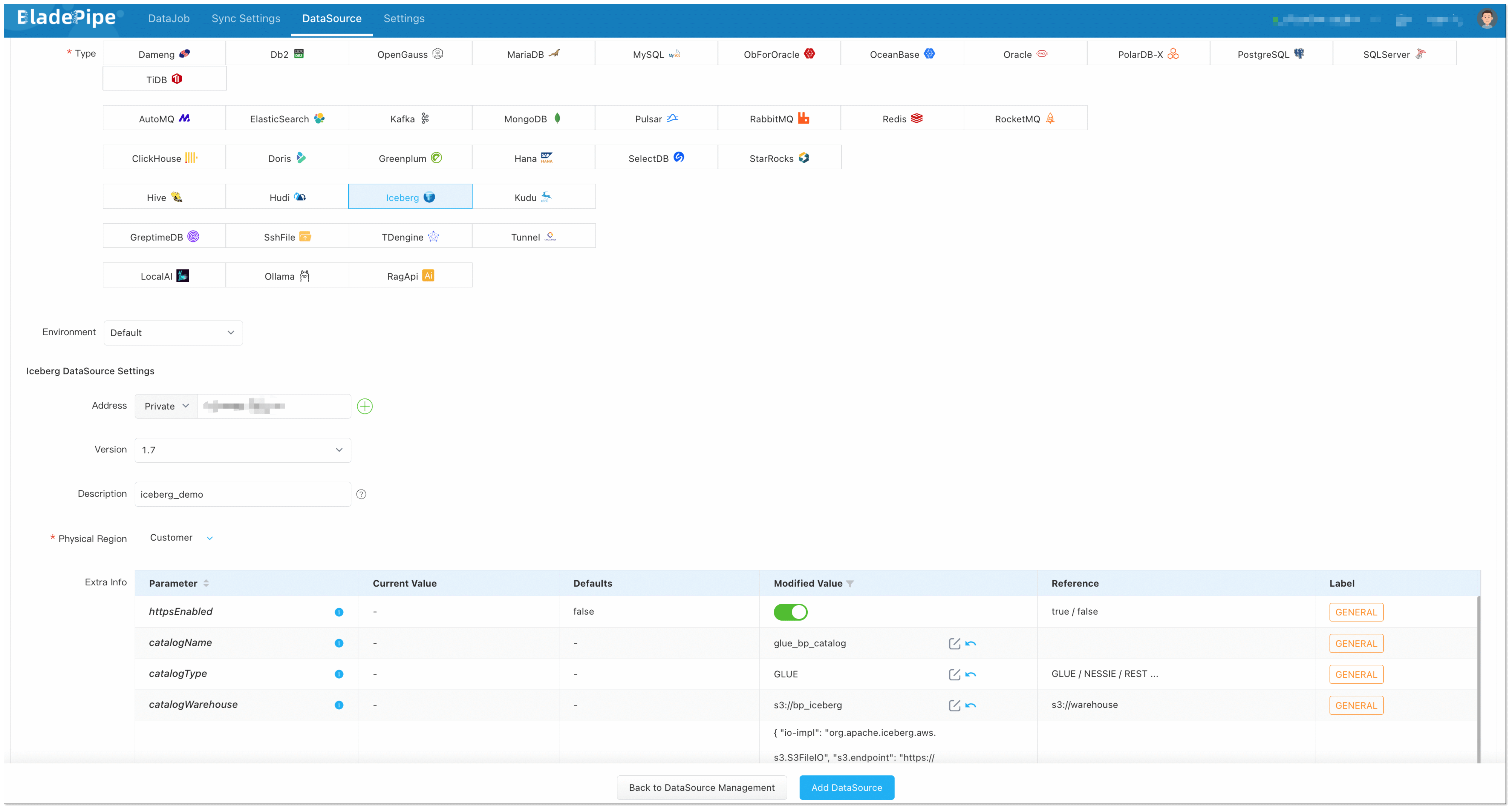

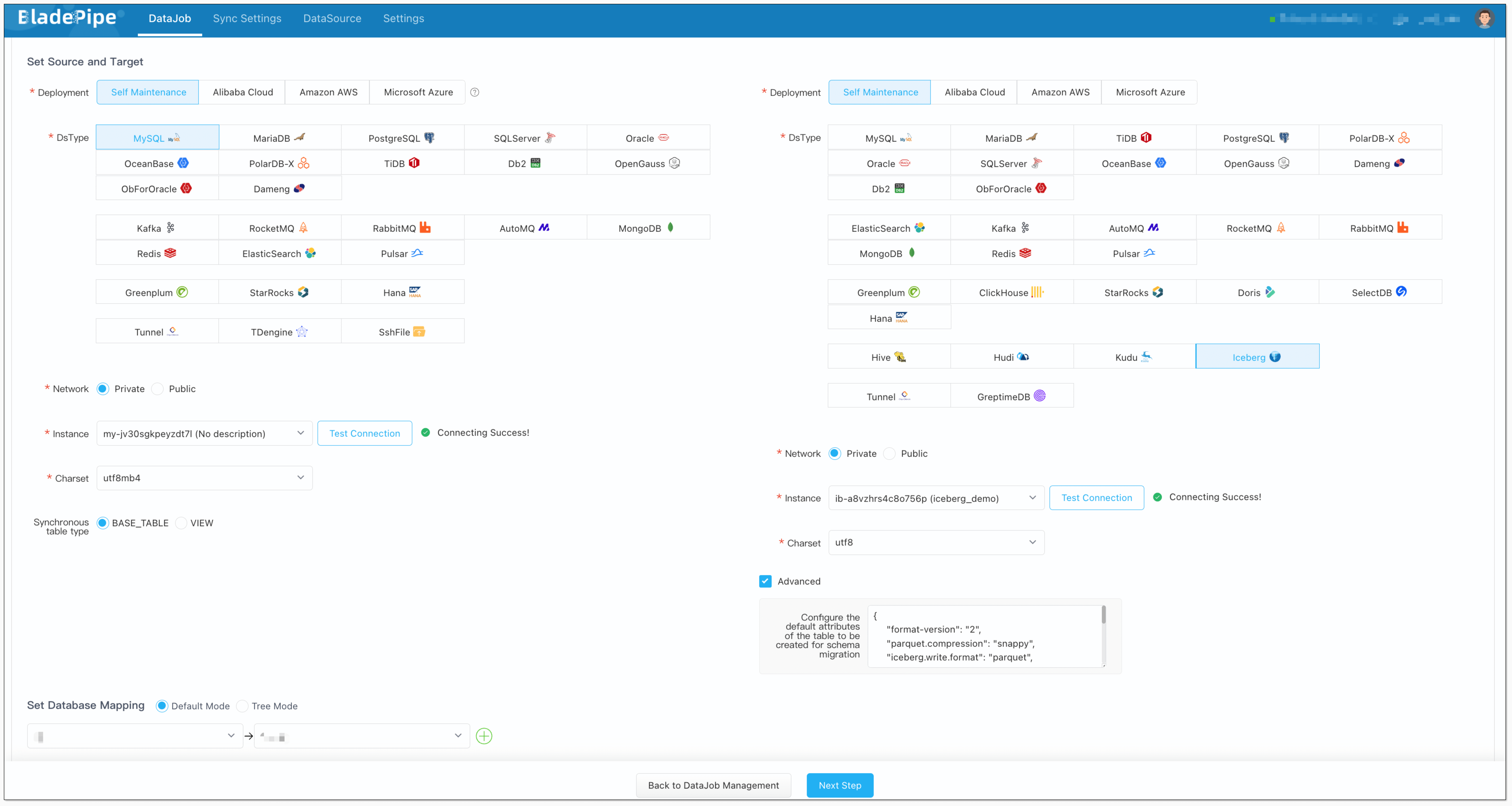

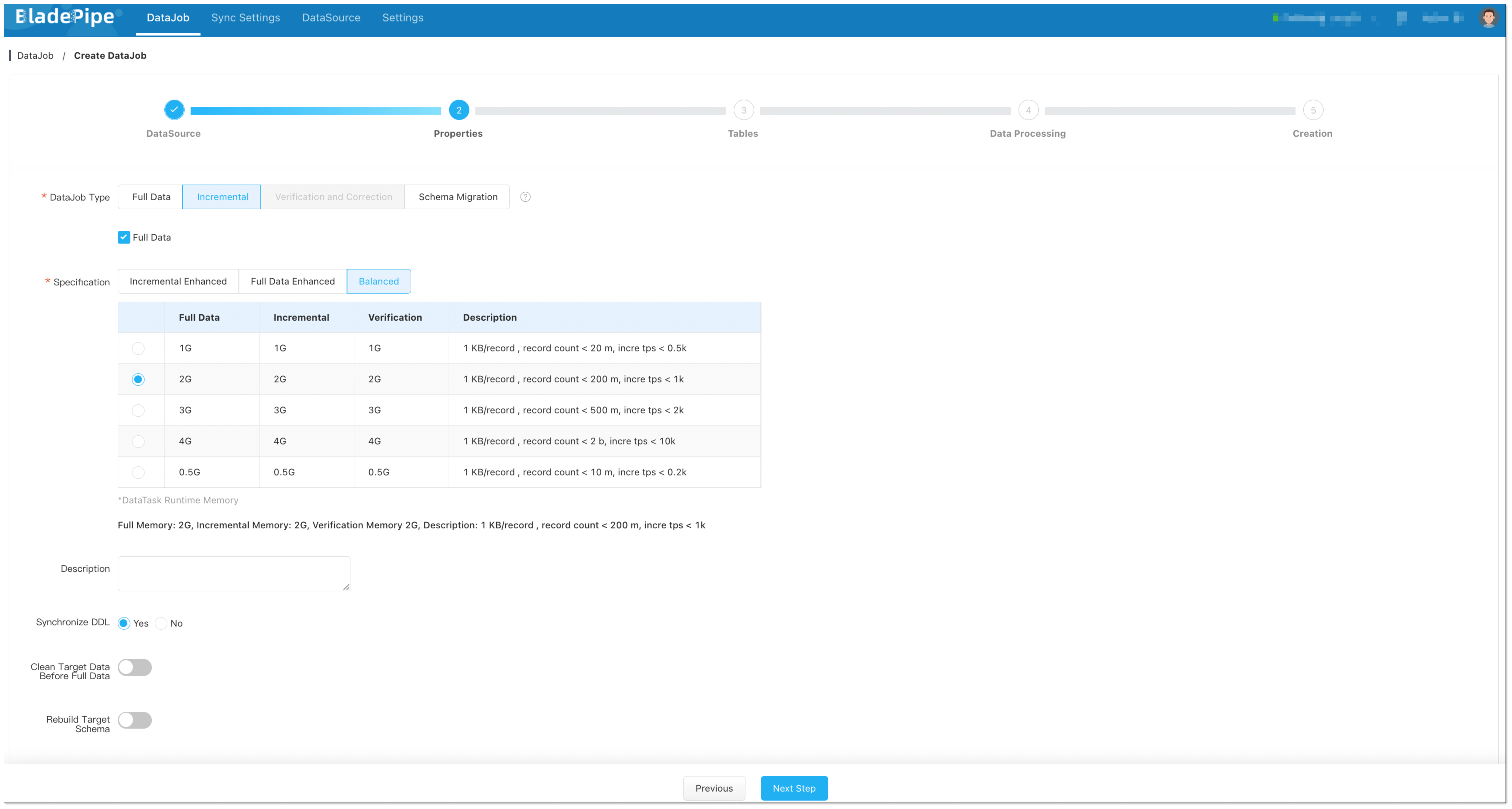

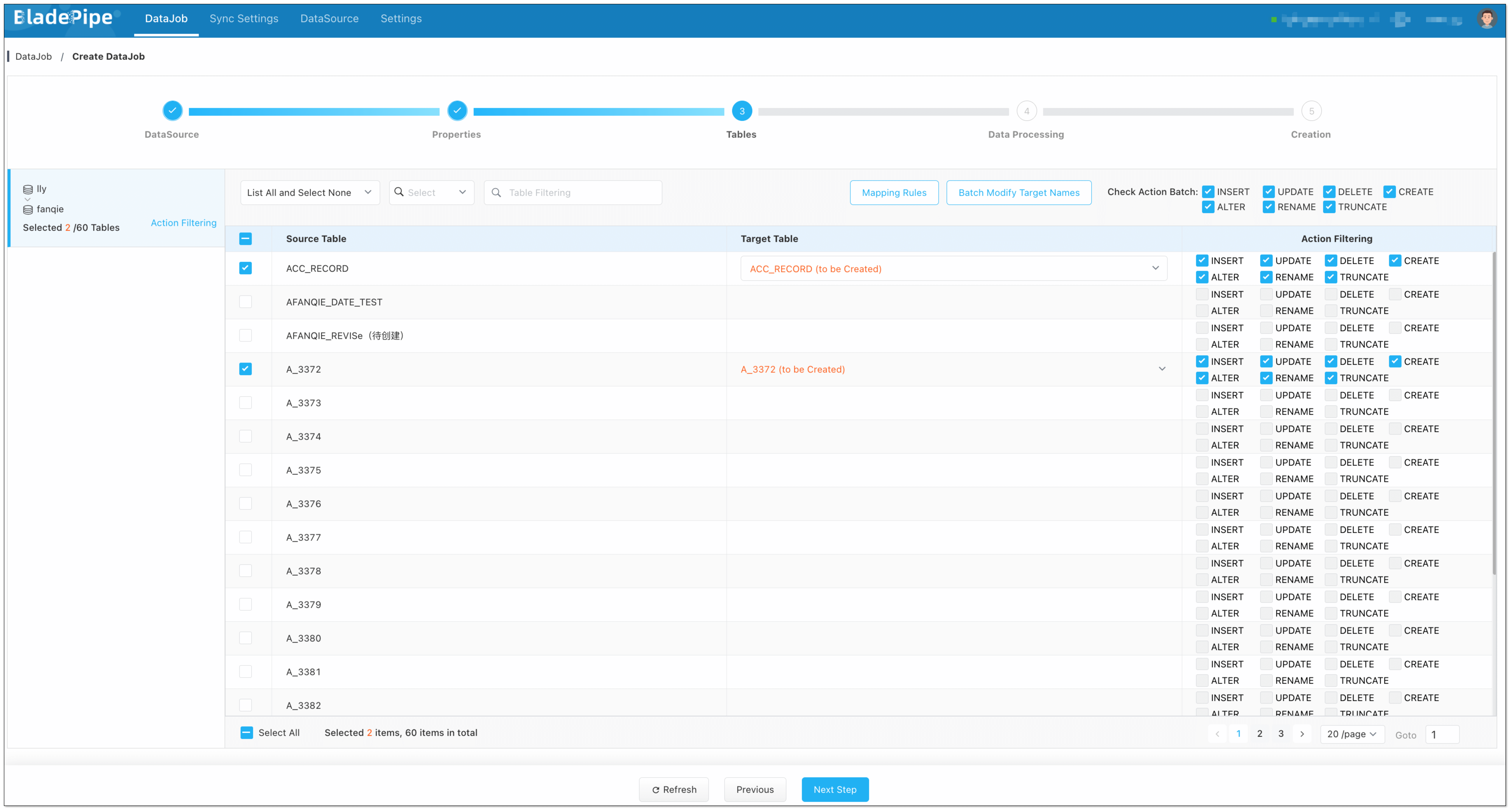

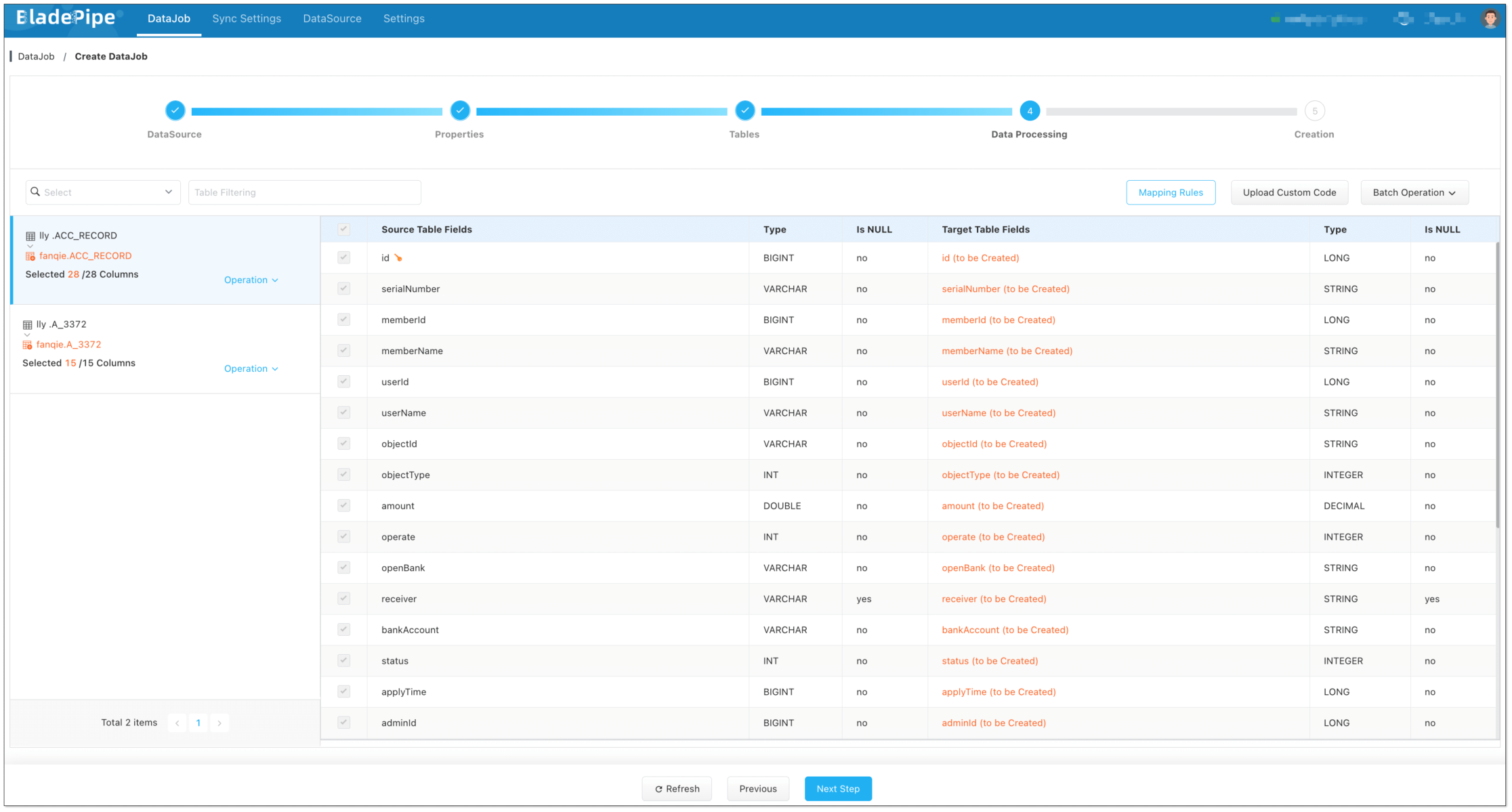

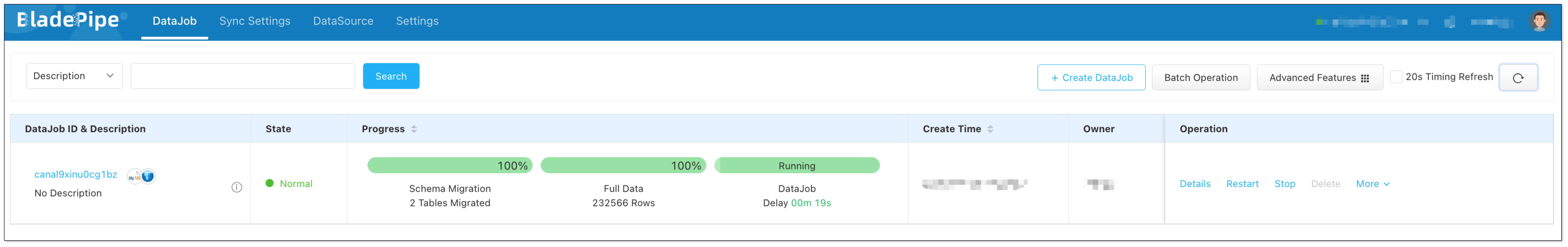

diff --git a/1.10.1/docs/bladepipe.md b/1.10.1/docs/bladepipe.md new file mode 100644 index 0000000..73831ad --- /dev/null +++ b/1.10.1/docs/bladepipe.md