| commit | b5e39bedab14a7fd800597ee0114b07448c1b0f9 | [log] [tgz] |

|---|---|---|

| author | Angerszhuuuu <angers.zhu@gmail.com> | Tue May 07 14:47:40 2024 +0800 |

| committer | Wenchen Fan <wenchen@databricks.com> | Tue May 07 14:47:40 2024 +0800 |

| tree | 820f2ce6212f15650799c77a26b3673f52f62bf7 | |

| parent | 56fe185c78a249cf88b1d7e5d1e67444e1b224db [diff] |

[SPARK-48027][SQL] InjectRuntimeFilter for multi-level join should check child join type

### What changes were proposed in this pull request?

In our prod we meet a case

```

with

refund_info as (

select

b_key,

1 as b_type

from default.table_b

),

next_month_time as (

select /*+ broadcast(b, c) */

c_key

,1 as c_time

FROM default.table_c

)

select

a.loan_id

,c.c_time

,b.type

from (

select

a_key

from default.table_a2

union

select

a_key

from default.table_a1

) a

left join refund_info b on a.loan_id = b.loan_id

left join next_month_time c on a.loan_id = c.loan_id

;

```

In this query, it inject table_b as table_c's runtime bloom filter, but table_b join condition is LEFT OUTER, causing table_c missing data.

Caused by

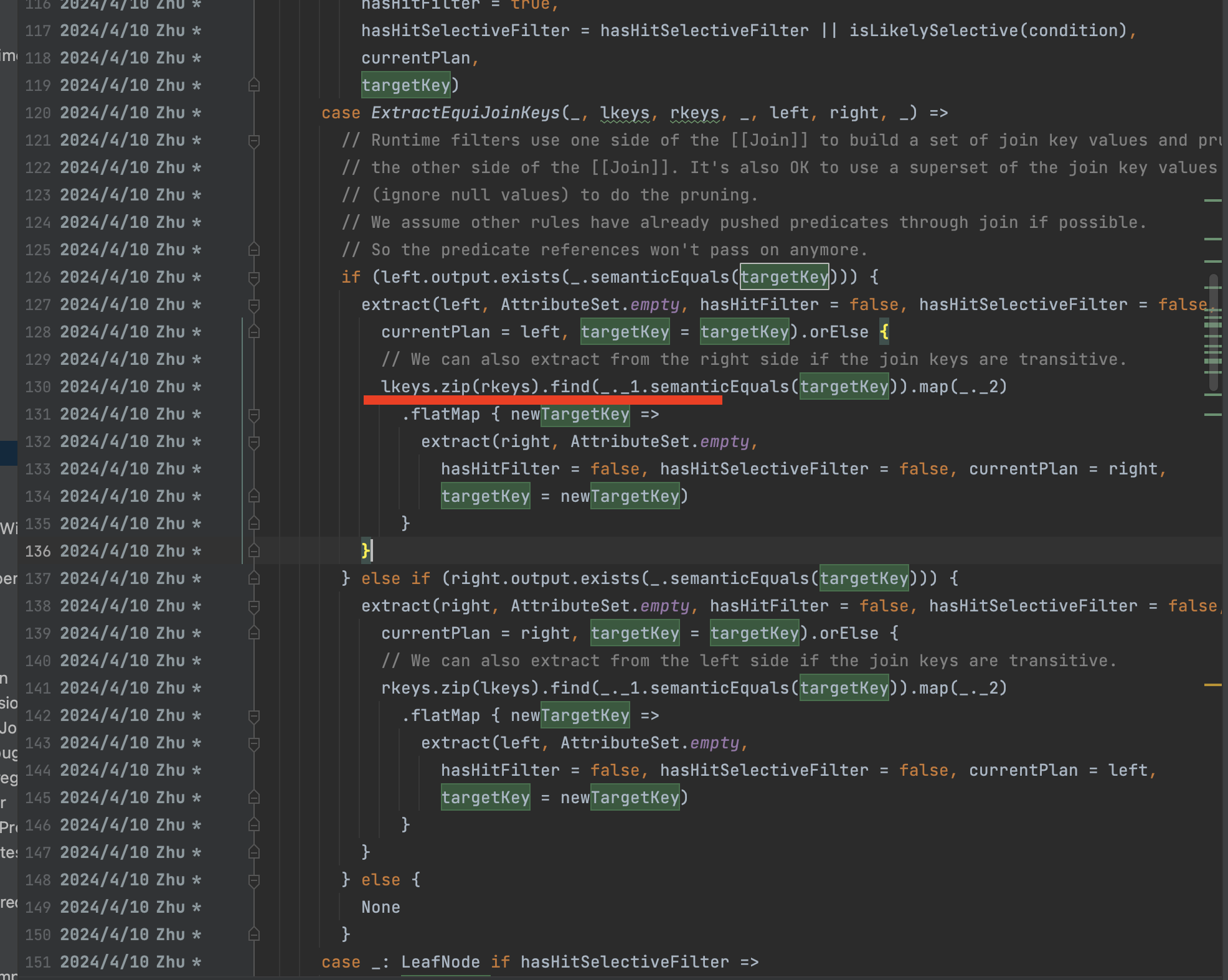

InjectRuntimeFilter.extractSelectiveFilterOverScan(), when handle join, since left plan (a left outer join b's a) is a UNION then the extract result is NONE, then zip left/right keys to extract from join's right, finnaly cause this issue.

### Why are the changes needed?

Fix data correctness issue

### Does this PR introduce _any_ user-facing change?

Yea, fix data incorrect issue

### How was this patch tested?

For the existed PR, it fix the wrong case

Before: It extract a LEFT_ANTI_JOIN's right child to the outside bf3....its not correct

```

Join Inner, (c3#45926 = c1#45914)

:- Join LeftAnti, (c1#45914 = c2#45920)

: :- Filter isnotnull(c1#45914)

: : +- Relation default.bf1[a1#45912,b1#45913,c1#45914,d1#45915,e1#45916,f1#45917] parquet

: +- Project [c2#45920]

: +- Filter ((isnotnull(a2#45918) AND (a2#45918 = 5)) AND isnotnull(c2#45920))

: +- Relation default.bf2[a2#45918,b2#45919,c2#45920,d2#45921,e2#45922,f2#45923] parquet

+- Filter (isnotnull(c3#45926) AND might_contain(scalar-subquery#48719 [], xxhash64(c3#45926, 42)))

: +- Aggregate [bloom_filter_agg(xxhash64(c2#45920, 42), 1000000, 8388608, 0, 0) AS bloomFilter#48718]

: +- Project [c2#45920]

: +- Filter ((isnotnull(a2#45918) AND (a2#45918 = 5)) AND isnotnull(c2#45920))

: +- Relation default.bf2[a2#45918,b2#45919,c2#45920,d2#45921,e2#45922,f2#45923] parquet

+- Relation default.bf3[a3#45924,b3#45925,c3#45926,d3#45927,e3#45928,f3#45929] parquet

```

After:

```

Join Inner, (c3#45926 = c1#45914)

:- Join LeftAnti, (c1#45914 = c2#45920)

: :- Filter isnotnull(c1#45914)

: : +- Relation default.bf1[a1#45912,b1#45913,c1#45914,d1#45915,e1#45916,f1#45917] parquet

: +- Project [c2#45920]

: +- Filter ((isnotnull(a2#45918) AND (a2#45918 = 5)) AND isnotnull(c2#45920))

: +- Relation default.bf2[a2#45918,b2#45919,c2#45920,d2#45921,e2#45922,f2#45923] parquet

+- Filter (isnotnull(c3#45926))

+- Relation default.bf3[a3#45924,b3#45925,c3#45926,d3#45927,e3#45928,f3#45929] parquet

```

### Was this patch authored or co-authored using generative AI tooling?

NO

Closes #46263 from AngersZhuuuu/SPARK-48027.

Lead-authored-by: Angerszhuuuu <angers.zhu@gmail.com>

Co-authored-by: Wenchen Fan <cloud0fan@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

Spark is a unified analytics engine for large-scale data processing. It provides high-level APIs in Scala, Java, Python, and R, and an optimized engine that supports general computation graphs for data analysis. It also supports a rich set of higher-level tools including Spark SQL for SQL and DataFrames, pandas API on Spark for pandas workloads, MLlib for machine learning, GraphX for graph processing, and Structured Streaming for stream processing.

You can find the latest Spark documentation, including a programming guide, on the project web page. This README file only contains basic setup instructions.

Spark is built using Apache Maven. To build Spark and its example programs, run:

./build/mvn -DskipTests clean package

(You do not need to do this if you downloaded a pre-built package.)

More detailed documentation is available from the project site, at “Building Spark”.

For general development tips, including info on developing Spark using an IDE, see “Useful Developer Tools”.

The easiest way to start using Spark is through the Scala shell:

./bin/spark-shell

Try the following command, which should return 1,000,000,000:

scala> spark.range(1000 * 1000 * 1000).count()

Alternatively, if you prefer Python, you can use the Python shell:

./bin/pyspark

And run the following command, which should also return 1,000,000,000:

>>> spark.range(1000 * 1000 * 1000).count()

Spark also comes with several sample programs in the examples directory. To run one of them, use ./bin/run-example <class> [params]. For example:

./bin/run-example SparkPi

will run the Pi example locally.

You can set the MASTER environment variable when running examples to submit examples to a cluster. This can be spark:// URL, “yarn” to run on YARN, and “local” to run locally with one thread, or “local[N]” to run locally with N threads. You can also use an abbreviated class name if the class is in the examples package. For instance:

MASTER=spark://host:7077 ./bin/run-example SparkPi

Many of the example programs print usage help if no params are given.

Testing first requires building Spark. Once Spark is built, tests can be run using:

./dev/run-tests

Please see the guidance on how to run tests for a module, or individual tests.

There is also a Kubernetes integration test, see resource-managers/kubernetes/integration-tests/README.md

Spark uses the Hadoop core library to talk to HDFS and other Hadoop-supported storage systems. Because the protocols have changed in different versions of Hadoop, you must build Spark against the same version that your cluster runs.

Please refer to the build documentation at “Specifying the Hadoop Version and Enabling YARN” for detailed guidance on building for a particular distribution of Hadoop, including building for particular Hive and Hive Thriftserver distributions.

Please refer to the Configuration Guide in the online documentation for an overview on how to configure Spark.

Please review the Contribution to Spark guide for information on how to get started contributing to the project.