| commit | e494cee9b540eb19cc0220cc3b3dbfde074b55c9 | |
|---|---|---|
| author | Thom Lane <thom.e.lane@gmail.com> | Tue Jun 26 09:54:06 2018 -0700 |
| committer | Indu Bharathi <indhubharathi@gmail.com> | Tue Jun 26 09:54:06 2018 -0700 |
| tree | f76b46c0566262441e545de178debdeed5453bab | |
| parent | ed7e3602a8046646582c0c681b70d9556f5fa0a4 | |

[MXNET-593] Adding 2 tutorials on Learning Rate Schedules (#11296)

* Added two tutorials on learning rate schedules; basic and advanced.
* Correcting notebook skip line.
* Corrected cosine graph
* Changes based on @KellenSunderland feedback.


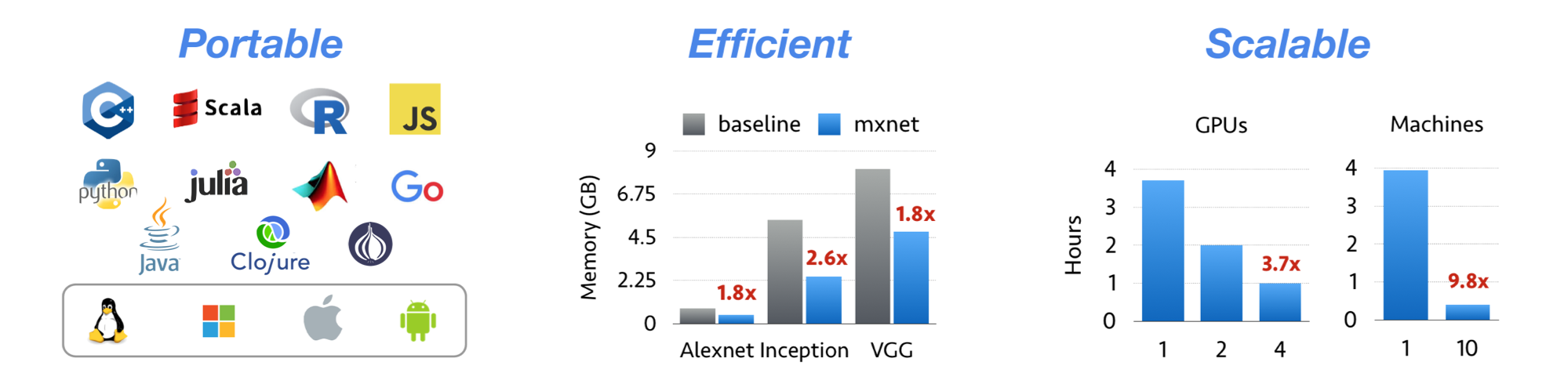

Apache MXNet (incubating) is a deep learning framework designed for both efficiency and flexibility. It allows you to mix symbolic and imperative programming to maximize efficiency and productivity. At its core, MXNet contains a dynamic dependency scheduler that automatically parallelizes both symbolic and imperative operations on the fly. A graph optimization layer on top of that makes symbolic execution fast and memory efficient. MXNet is portable and lightweight, scaling effectively to multiple GPUs and multiple machines.
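To make the distinction between imperative and symbolic programming concrete, here is a minimal toy sketch in plain Python. It does not use MXNet, and `Node`, `variable`, `evaluate`, and `imperative_sum_times_two` are hypothetical names invented for this illustration. The imperative function computes each step as soon as it runs, while the symbolic version first builds a graph of operations and only computes when inputs are bound.

```python
# Toy illustration only: Node, variable, and evaluate are hypothetical
# names for this sketch, not part of MXNet.

def imperative_sum_times_two(a, b):
    """Imperative style: each statement computes its result immediately."""
    c = a + b
    return c * 2


class Node:
    """Symbolic style: a node records an operation instead of running it."""

    def __init__(self, op, inputs=(), name=None, value=None):
        self.op = op          # "var", "const", "add", or "mul"
        self.inputs = inputs  # child nodes for "add" and "mul"
        self.name = name      # lookup key for "var" nodes
        self.value = value    # literal for "const" nodes

    def __add__(self, other):
        return Node("add", (self, _wrap(other)))

    def __mul__(self, other):
        return Node("mul", (self, _wrap(other)))

    def evaluate(self, bindings):
        """Walk the graph and compute a result from the bound inputs."""
        if self.op == "var":
            return bindings[self.name]
        if self.op == "const":
            return self.value
        left, right = (node.evaluate(bindings) for node in self.inputs)
        return left + right if self.op == "add" else left * right


def _wrap(x):
    """Turn a plain Python number into a constant node."""
    return x if isinstance(x, Node) else Node("const", value=x)


def variable(name):
    """Create a placeholder that is bound to a value at evaluation time."""
    return Node("var", name=name)


graph = (variable("a") + variable("b")) * 2   # no arithmetic happens here
print(imperative_sum_times_two(1, 2))          # 6
print(graph.evaluate({"a": 1, "b": 2}))        # 6
```

Because the symbolic form exists as a whole graph before anything runs, a framework has the chance to optimize it ahead of execution; the imperative form trades that for step-by-step flexibility.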

MXNet is more than a deep learning project. It is also a collection of blueprints and guidelines for building deep learning systems, and a source of interesting insights into DL systems for hackers.

Licensed under an Apache-2.0 license.

Tianqi Chen, Mu Li, Yutian Li, Min Lin, Naiyan Wang, Minjie Wang, Tianjun Xiao, Bing Xu, Chiyuan Zhang, and Zheng Zhang. MXNet: A Flexible and Efficient Machine Learning Library for Heterogeneous Distributed Systems. In Neural Information Processing Systems, Workshop on Machine Learning Systems, 2015

MXNet emerged from a collaboration among the authors of cxxnet, minerva, and purine2. The project reflects what we learned from those earlier projects and combines aspects of each to achieve flexibility, speed, and memory efficiency.