
Apache Airflow (or simply Airflow) is a platform to programmatically author, schedule, and monitor workflows.

When workflows are defined as code, they become more maintainable, versionable, testable, and collaborative.

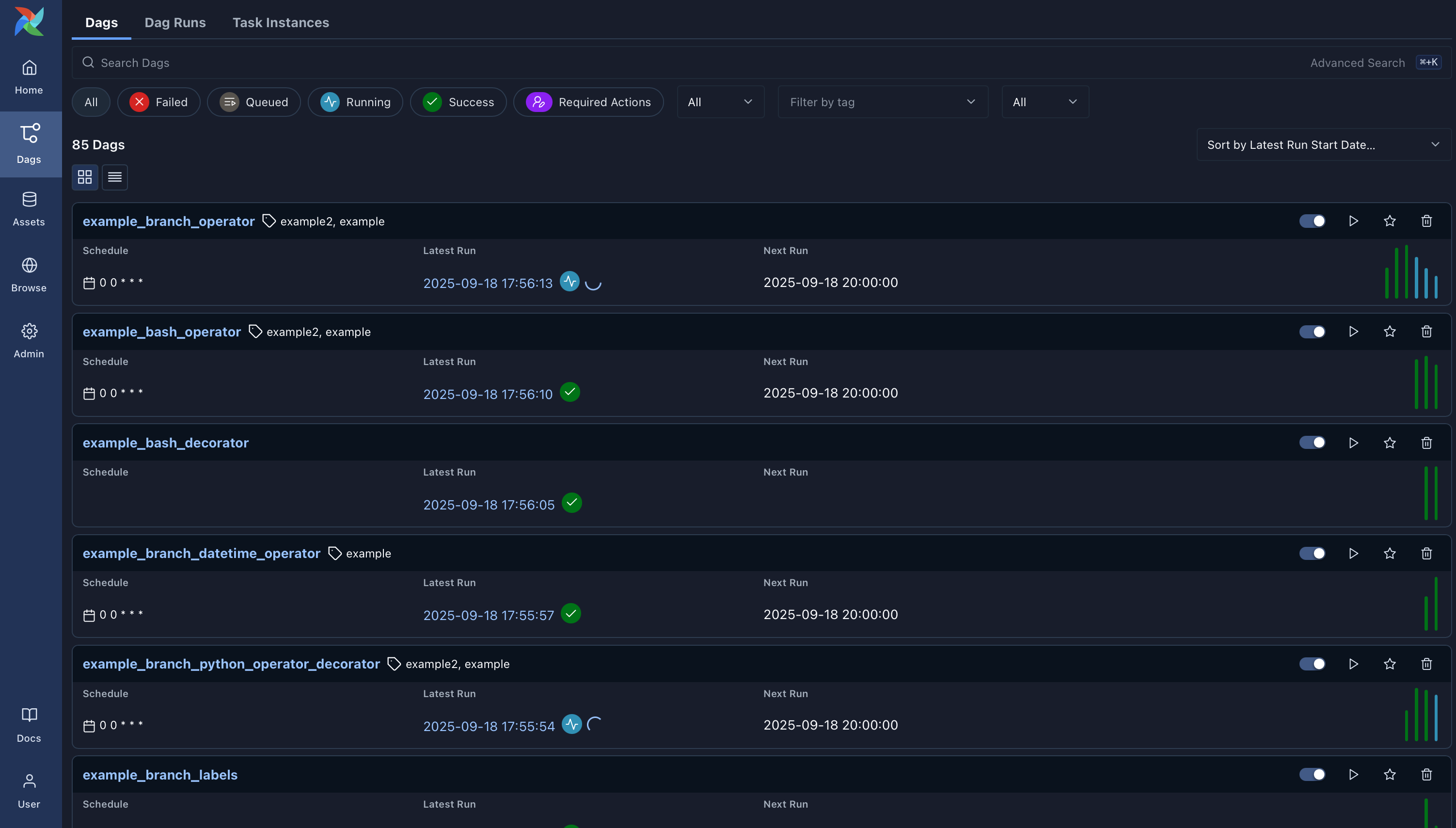

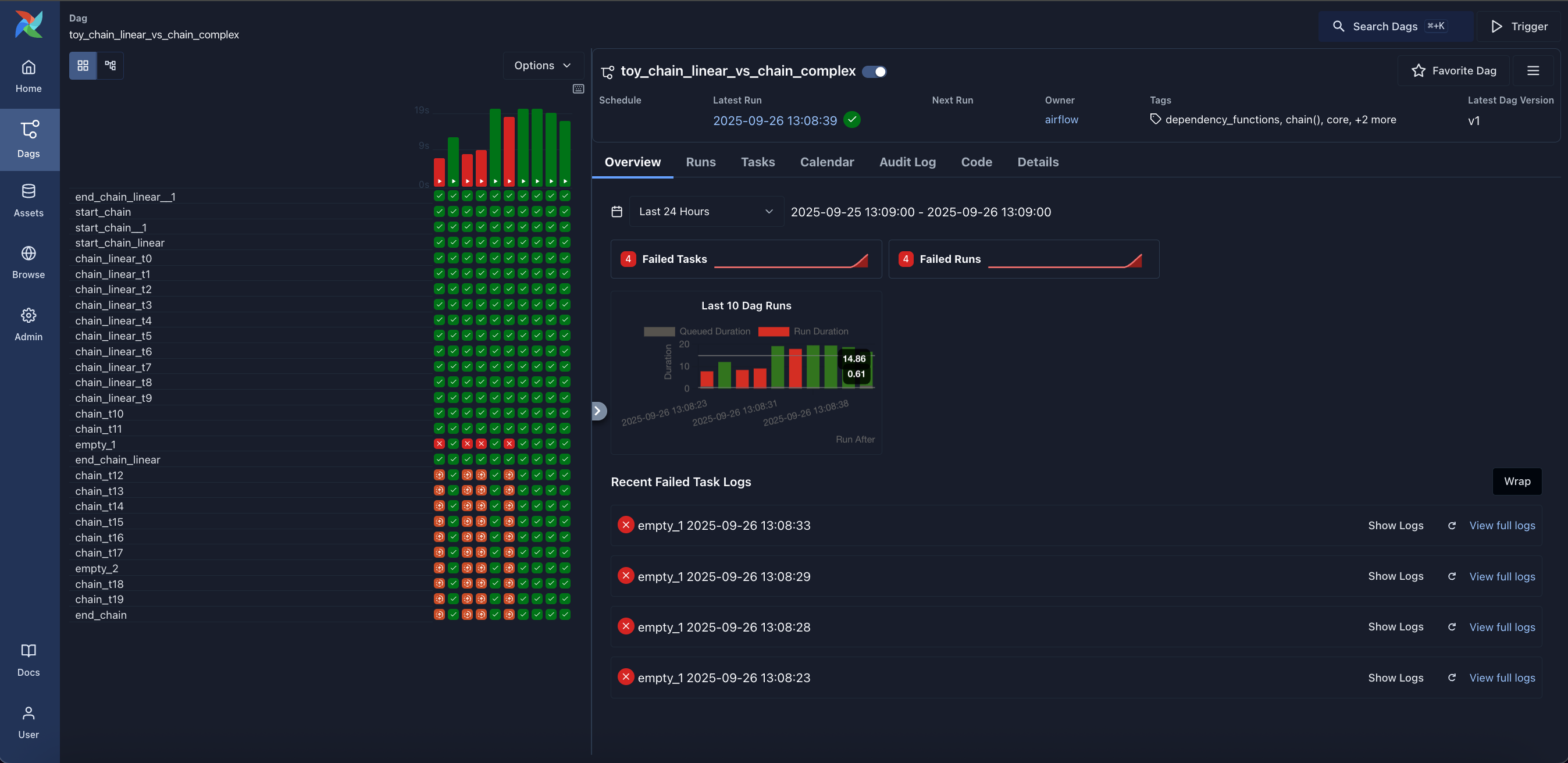

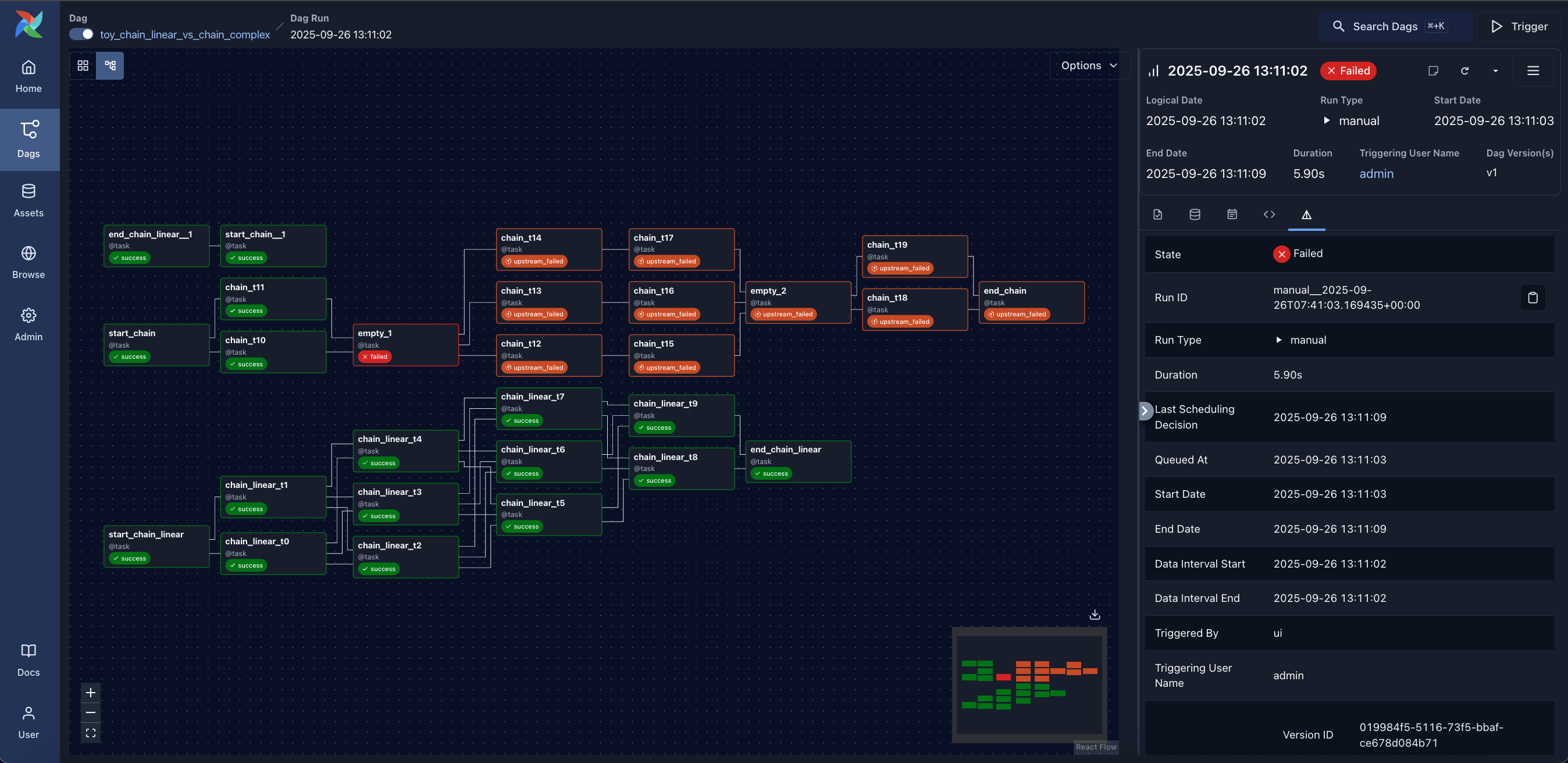

Use Airflow to author workflows (Dags) that orchestrate tasks. The Airflow scheduler executes your tasks on an array of workers while following the specified dependencies. Rich command line utilities make performing complex surgeries on Dags a snap. The rich user interface makes it easy to visualize pipelines running in production, monitor progress, and troubleshoot issues when needed.
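To illustrate what "following the specified dependencies" means, here is a minimal sketch in plain Python. It is not Airflow's scheduler or API: the task names are hypothetical, and the standard-library `graphlib` module stands in for the dependency resolution.

```python
from graphlib import TopologicalSorter

# Hypothetical task names; each key maps to the set of tasks it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"load"},
}


def execution_order(deps):
    """Return one valid order in which every task runs after its upstream tasks."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

A real scheduler also has to handle retries, parallelism, and time-based scheduling; the sketch only shows the ordering constraint.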


Airflow works best with workflows that are mostly static and slowly changing. When the Dag structure is similar from one run to the next, it clarifies the unit of work and continuity. Other similar projects include Luigi, Oozie and Azkaban.

Airflow is commonly used to process data, but has the opinion that tasks should ideally be idempotent (i.e., results of the task will be the same, and will not create duplicated data in a destination system), and should not pass large quantities of data from one task to the next (though tasks can pass metadata using Airflow's XCom feature). For high-volume, data-intensive tasks, a best practice is to delegate to external services specializing in that type of work.
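As a rough illustration of idempotency, the sketch below writes rows keyed by an identifier, so running the same load twice leaves the destination unchanged. The `load_rows` function, the `id` key, and the in-memory dictionary standing in for a destination system are all assumptions made for this example.

```python
def load_rows(destination: dict, rows: list) -> dict:
    """Upsert rows keyed by "id" so that re-running the task does not duplicate data."""
    for row in rows:
        destination[row["id"]] = row
    return destination


# Hypothetical destination table and batch of rows.
table = {}
batch = [{"id": 1, "value": "a"}, {"id": 2, "value": "b"}]

load_rows(table, batch)
first_run = dict(table)

# A retry or rerun of the same task writes the same keys again.
load_rows(table, batch)
print(table == first_run)
```

An append-only write (for example, `list.append`) would create duplicates on the second run, which is what the guidance above is meant to avoid.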

Airflow is not a streaming solution, but it is often used to process real-time data, pulling data off streams in batches.
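Pulling data off a stream in batches can be sketched as follows. This is a generic micro-batching helper in plain Python, not an Airflow feature; the `batches` function and the integer "events" are hypothetical.

```python
from itertools import islice
from typing import Iterable, Iterator


def batches(stream: Iterable, size: int) -> Iterator[list]:
    """Yield consecutive lists of at most `size` items until the stream is exhausted."""
    iterator = iter(stream)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch


# Hypothetical stream of seven events, consumed three at a time.
events = range(7)
result = list(batches(events, 3))
print(result)
```

Each batch could then be handed to one task run, which keeps the unit of work bounded.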

Apache Airflow is tested with:

| | Main version (dev) | Stable version (3.2.0) | Stable version (2.11.2) |
|---|---|---|---|
| Python | 3.10, 3.11, 3.12, 3.13, 3.14 | 3.10, 3.11, 3.12, 3.13, 3.14 | 3.10, 3.11, 3.12 |
| Platform | AMD64/ARM64 | AMD64/ARM64 | AMD64/ARM64(\*) |
| Kubernetes | 1.30, 1.31, 1.32, 1.33, 1.34, 1.35 | 1.30, 1.31, 1.32, 1.33, 1.34, 1.35 | 1.26, 1.27, 1.28, 1.29, 1.30 |
| PostgreSQL | 14, 15, 16, 17, 18 | 14, 15, 16, 17, 18 | 12, 13, 14, 15, 16 |
| MySQL | 8.0, 8.4, Innovation | 8.0, 8.4, Innovation | 8.0, Innovation |
| SQLite | 3.15.0+ | 3.15.0+ | 3.15.0+ |

\* Experimental

Note: MariaDB is not tested/recommended.

Note: SQLite is used in Airflow tests. Do not use it in production. We recommend using the latest stable version of SQLite for local development.

Note: Airflow can currently be run on POSIX-compliant operating systems. For development, it is regularly tested on fairly modern Linux distros and recent versions of macOS. On Windows you can run it via WSL2 (Windows Subsystem for Linux 2) or via Linux containers. The work to add Windows support is tracked via #10388, but it is not a high priority. You should only use Linux-based distros as a “Production” execution environment, as this is the only supported environment. The only distro used in our CI tests and in the community-managed DockerHub image is Debian Bookworm.

Visit the official Airflow website documentation (latest stable release) for help with installing Airflow, getting started, or walking through a more complete tutorial.

Note: If you're looking for documentation for the main branch (latest development branch): you can find it on s.apache.org/airflow-docs.

For more information on Airflow Improvement Proposals (AIPs), visit the Airflow Wiki.

You'll find documentation for dependent projects, such as provider distributions, the Docker image, and the Helm Chart, in the documentation index.

We publish Apache Airflow as the `apache-airflow` package on PyPI. Installing it, however, can sometimes be tricky because Airflow is a bit of both a library and an application. Libraries usually keep their dependencies open, and applications usually pin them, but we should do neither and both simultaneously. We decided to keep our dependencies as open as possible (in `pyproject.toml`) so users can install different versions of libraries if needed. This means that `pip install apache-airflow` will not work from time to time or will produce an unusable Airflow installation.

To have a repeatable installation, however, we keep a set of “known-to-be-working” constraint files in the orphan constraints-main and constraints-2-0 branches. We keep those “known-to-be-working” constraint files separately per major/minor Python version. You can use them as constraint files when installing Airflow from PyPI. Note that you have to specify the correct Airflow tag/version/branch and Python versions in the URL.

Note: Only `pip` installation is currently officially supported.

While it is possible to install Airflow with tools like Poetry or pip-tools, they do not share the same workflow as pip - especially when it comes to constraint vs. requirements management. Installing via Poetry or pip-tools is not currently supported.

If you wish to install Airflow using those tools, you should use the constraint files and convert them to the appropriate format and workflow that your tool requires.

`pip install 'apache-airflow==3.2.0' --constraint "https://raw.githubusercontent.com/apache/airflow/constraints-3.2.0/constraints-3.10.txt"`

`pip install 'apache-airflow[postgres,google]==3.2.0' --constraint "https://raw.githubusercontent.com/apache/airflow/constraints-3.2.0/constraints-3.10.txt"`
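Because the constraint URL has to match both the Airflow version and the Python major/minor version, it can be convenient to build it programmatically. The sketch below assumes the URL pattern shown in the commands above; the helper names `constraints_url` and `pip_install_args` are hypothetical and not part of Airflow.

```python
def constraints_url(airflow_version: str, python_version: str) -> str:
    """Build the constraints URL for an Airflow version and a Python version."""
    # Only the major.minor part of the Python version appears in the file name.
    major_minor = ".".join(python_version.split(".")[:2])
    return (
        "https://raw.githubusercontent.com/apache/airflow/"
        f"constraints-{airflow_version}/constraints-{major_minor}.txt"
    )


def pip_install_args(airflow_version: str, python_version: str, extras=()) -> list:
    """Return the pip command as an argument list, optionally with extras."""
    extras_part = "[" + ",".join(extras) + "]" if extras else ""
    return [
        "pip",
        "install",
        f"apache-airflow{extras_part}=={airflow_version}",
        "--constraint",
        constraints_url(airflow_version, python_version),
    ]


print(" ".join(pip_install_args("3.2.0", "3.10.4", extras=("postgres", "google"))))
```

The argument list can be passed to `subprocess.run` if you want to run the installation from a script.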

For information on installing provider distributions, check providers.

For comprehensive instructions on setting up your local development environment and installing Apache Airflow, please refer to the INSTALLING.md file.

Apache Airflow is an Apache Software Foundation (ASF) project, and our official source code releases:

Following the ASF rules, the source packages released must be sufficient for a user to build and test the release provided they have access to the appropriate platform and tools.

There are other ways of installing and using Airflow. Those are “convenience” methods - they are not “official releases” as stated by the ASF Release Policy, but they can be used by the users who do not want to build the software themselves.

In order of how commonly people use them to install Airflow, those are:

- PyPI packages, installed with the standard `pip` tool.
- Container images, installed with the `docker` tool, which you can use in Kubernetes, Helm Charts, docker-compose, docker swarm, etc. You can read more about using, customizing, and extending the images in the Latest docs, and learn details on the internals in the images document.

All those artifacts are not official releases, but they are prepared using officially released sources. Some of those artifacts are “development” or “pre-release” ones, and they are clearly marked as such following the ASF Policy.

Dags: Overview of all Dags in your environment.

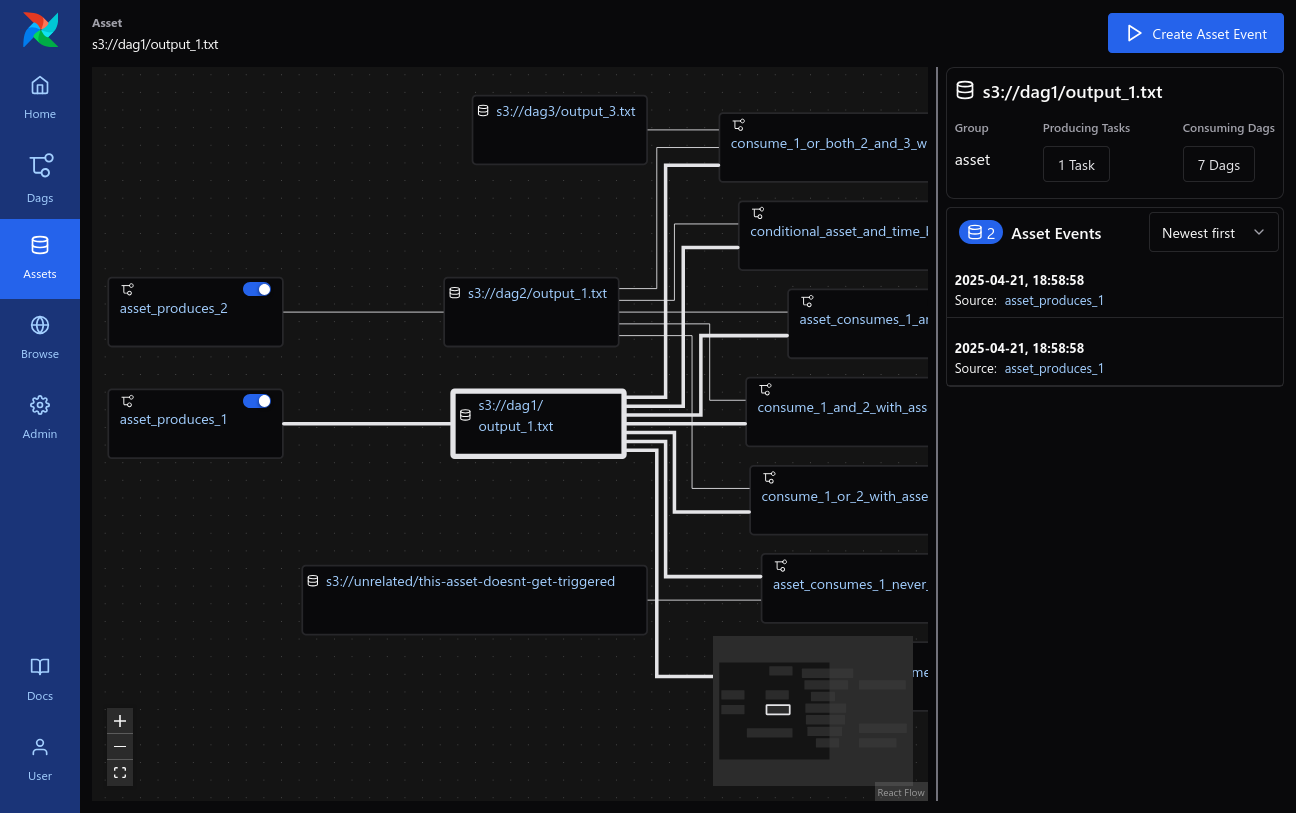

Assets: Overview of Assets with dependencies.

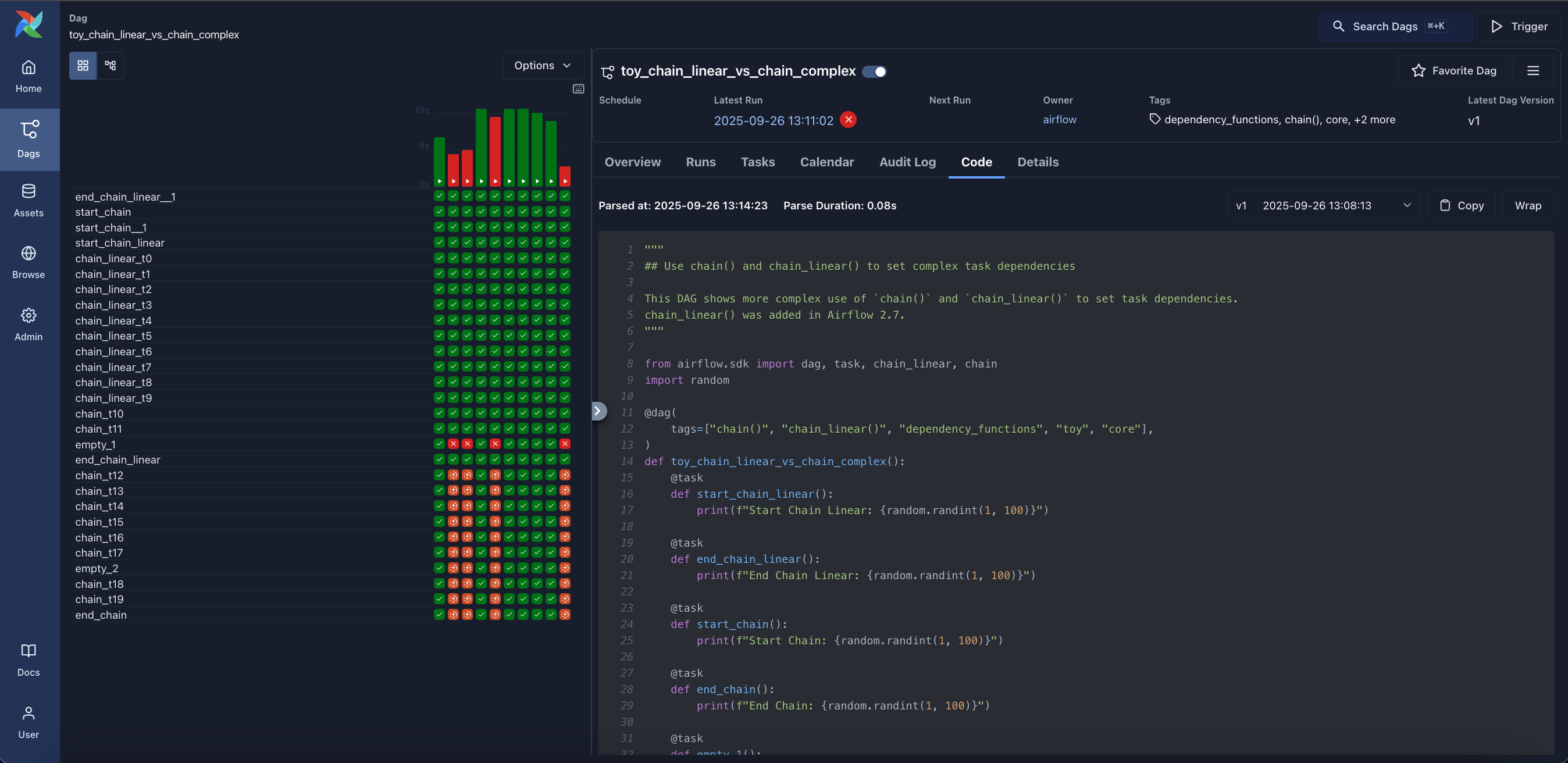

Grid: Grid representation of a Dag that spans across time.

Graph: Visualization of a Dag's dependencies and their current status for a specific run.

Home: Summary statistics of your Airflow environment.

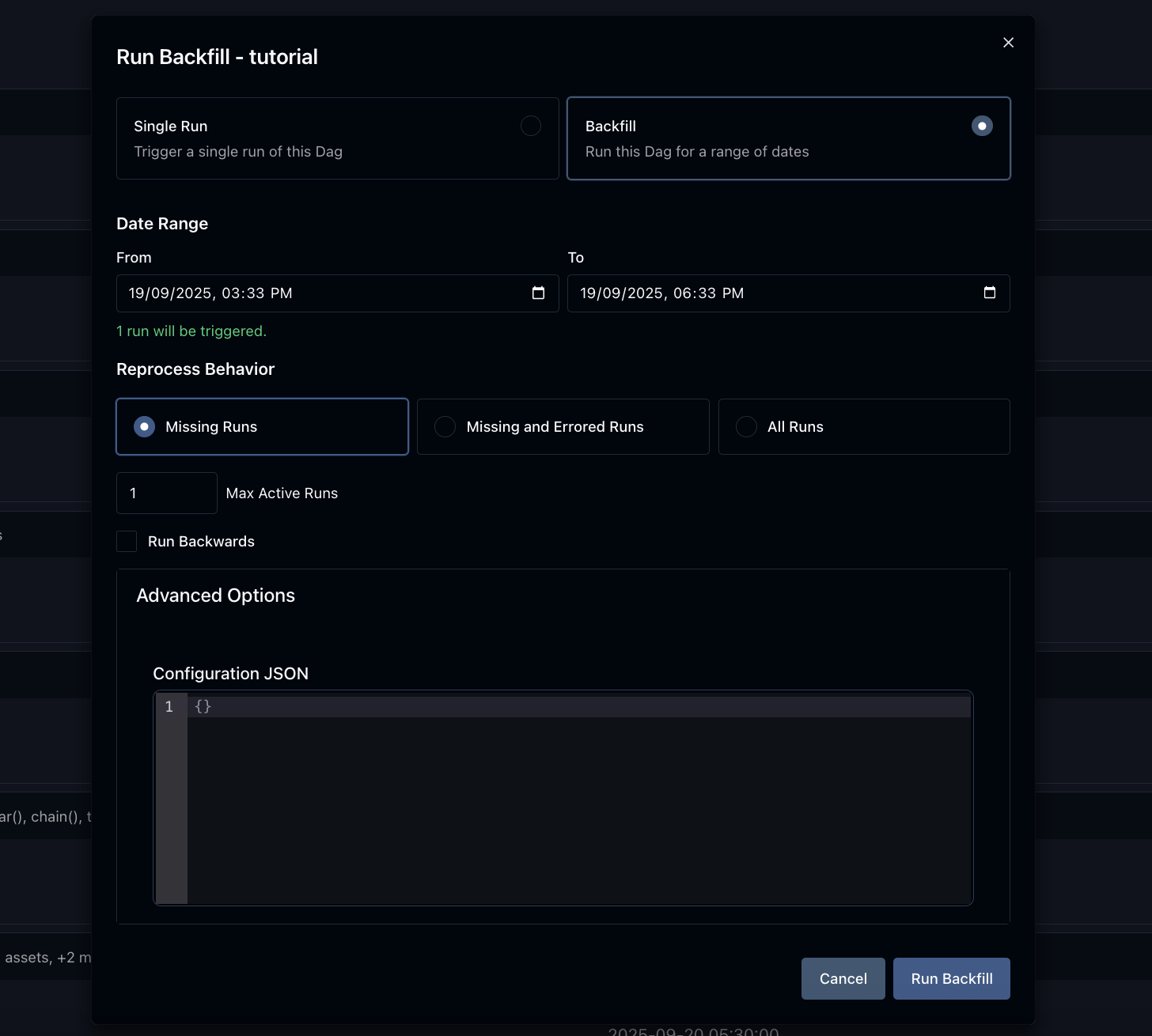

Backfill: Backfilling a Dag for a specific date range.

Code: Quick way to view source code of a Dag.

As of Airflow 2.0.0, we support a strict SemVer approach for all packages released.

There are a few specific rules that we agreed on, which define the versioning details of the different packages:

google 4.1.0 and amazon 3.1.1 providers can happily be installed with Airflow 2.1.2. If there are limits on cross-dependencies between providers and Airflow packages, they are present in providers as install_requires limitations. We aim to keep providers backwards compatible with all previously released Airflow 2 versions, but there will sometimes be breaking changes that might make some, or all, providers specify a minimum Airflow version.

Apache Airflow version life cycle:

| Version | Current Patch/Minor | State | First Release | Limited Maintenance | EOL/Terminated |
|---|---|---|---|---|---|
| 3 | 3.2.1 | Maintenance | Apr 22, 2025 | TBD | TBD |
| 2 | 2.11.2 | Limited maintenance | Dec 17, 2020 | Oct 22, 2025 | Apr 22, 2026 |
| 1.10 | 1.10.15 | EOL | Aug 27, 2018 | Dec 17, 2020 | June 17, 2021 |
| 1.9 | 1.9.0 | EOL | Jan 03, 2018 | Aug 27, 2018 | Aug 27, 2018 |
| 1.8 | 1.8.2 | EOL | Mar 19, 2017 | Jan 03, 2018 | Jan 03, 2018 |
| 1.7 | 1.7.1.2 | EOL | Mar 28, 2016 | Mar 19, 2017 | Mar 19, 2017 |

Versions in limited maintenance receive security and critical bug fixes only. EOL versions will not get any fixes or support. We always recommend that all users run the latest available minor release for whatever major version is in use. We highly recommend upgrading to the latest Airflow major release at the earliest convenient time and before the EOL date.
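The life cycle states can be read as a simple function of two dates. The sketch below uses the dates listed for Airflow 2 in the table above; the `lifecycle_state` helper is hypothetical, and it assumes a state takes effect on the listed date itself.

```python
from datetime import date


def lifecycle_state(today: date, limited_from: date, eol_from: date) -> str:
    """Classify a release line by comparing today's date with its milestone dates."""
    if today >= eol_from:
        return "EOL"
    if today >= limited_from:
        return "Limited maintenance"
    return "Maintenance"


# Dates for Airflow 2, taken from the life cycle table.
AIRFLOW_2_LIMITED = date(2025, 10, 22)
AIRFLOW_2_EOL = date(2026, 4, 22)

print(lifecycle_state(date(2025, 12, 1), AIRFLOW_2_LIMITED, AIRFLOW_2_EOL))
```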

As of Airflow 2.0, we agreed to certain rules we follow for Python and Kubernetes support. They are based on the official release schedule of Python and Kubernetes, nicely summarized in the Python Developer's Guide and Kubernetes version skew policy.

We drop support for Python and Kubernetes versions when they reach EOL. Except for Kubernetes, a version stays supported by Airflow if two major cloud providers still provide support for it. We drop support for those EOL versions in main right after the EOL date, and it is effectively removed when we release the first new MINOR (or MAJOR if there is no new MINOR version) of Airflow. For example, for Python 3.7 this meant that we dropped support in main right after its EOL date of 27.06.2023, and the first MAJOR or MINOR version of Airflow released afterwards did not include it.

We support a new version of Python/Kubernetes in main after it is officially released. As soon as we make it work in our CI pipeline (which might not be immediate, mostly because dependencies need to catch up with new versions of Python), we release new images/support in Airflow based on the working CI setup.

This policy is best-effort, which means there may be situations where we terminate support earlier if circumstances require it.

The Airflow Community provides conveniently packaged container images that are published whenever we publish an Apache Airflow release. Those images contain:

The version of the base OS image is the stable version of Debian. Airflow supports using all currently active stable versions - as soon as all Airflow dependencies support building, and we set up the CI pipeline for building and testing the OS version. Approximately 6 months before the end-of-regular support of a previous stable version of the OS, Airflow switches the images released to use the latest supported version of the OS.

For example, the switch from Debian Bullseye to Debian Bookworm was implemented before the 2.8.0 release in October 2023, and Debian Bookworm is the only option supported as of Airflow 2.10.0.

Users will continue to be able to build their images using stable Debian releases until the end of regular support. Building and verifying of the images happens in our CI, but no unit tests are executed using this image in the main branch.

Airflow has a lot of dependencies, both direct and transitive. Airflow is also both a library and an application, so our dependency policies have to cover both the stability of installing the application and the ability to install newer versions of dependencies for users who develop Dags. We developed an approach where constraints are used to make sure Airflow can be installed in a repeatable way, while we do not prevent our users from upgrading most of the dependencies. As a result, we decided not to upper-bound the versions of Airflow dependencies by default, unless we have good reasons to believe upper-bounding them is needed because of the importance of the dependency as well as the risk involved in upgrading it. We also upper-bound the dependencies that we know cause problems.

Our constraint mechanism takes care of finding and upgrading all the non-upper-bound dependencies automatically (provided that all the tests pass). Failures of our main build will indicate when there are versions of dependencies that break our tests, meaning that we should either upper-bound them or fix our code/tests to account for the upstream changes from those dependencies.

Whenever we upper-bound such a dependency, we should always comment why we are doing it, i.e. we should have a good reason why the dependency is upper-bound. We should also mention the condition for removing the binding.

Those dependencies are maintained in `pyproject.toml`.

There are a few dependencies that we decided are important enough to upper-bound by default, as they are known to follow a predictable versioning scheme, and we know that new versions of those are very likely to bring breaking changes. We commit to regularly reviewing and attempting to upgrade to newer versions of these dependencies as they are released, but this is a manual process.

The important dependencies are:

- `SQLAlchemy`: upper-bound to a specific MINOR version (SQLAlchemy is known to remove deprecations and introduce breaking changes, especially as support for different databases varies and changes at various speeds)
- `Alembic`: it is important to handle our migrations in a predictable and performant way. It is developed together with SQLAlchemy. Our experience with Alembic is that it is very stable in MINOR versions
- `Flask`: We are using Flask as the backbone of our web UI and API. We know that major versions of Flask are very likely to introduce breaking changes, so limiting it to the MAJOR version makes sense
- `werkzeug`: the library is known to cause problems in new versions. It is tightly coupled with Flask libraries, and we should update them together
- `celery`: Celery is a crucial component of Airflow as it is used for CeleryExecutor (and similar). Celery follows SemVer, so we should upper-bound it to the next MAJOR version. Also, when we bump the upper version of the library, we should make sure the Celery Provider minimum Airflow version is updated.
- `kubernetes`: Kubernetes is a crucial component of Airflow as it is used for the KubernetesExecutor (and similar). The Kubernetes Python library follows SemVer, so we should upper-bound it to the next MAJOR version. Also, when we bump the upper version of the library, we should make sure the Kubernetes Provider minimum Airflow version is updated.

The main part of Airflow is the Airflow Core, but the power of Airflow also comes from a number of providers that extend the core functionality and are released separately, even if we keep them (for now) in the same monorepo for convenience. You can read more about the providers in the Providers documentation. We also have a set of policies for maintaining and releasing community-managed providers, as well as the approach for community vs. 3rd party providers, in the providers document.

Those extras and providers dependencies are maintained in provider.yaml of each provider.

By default, we should not upper-bound dependencies for providers, however each provider's maintainer might decide to add additional limits (and justify them with comment).

Want to help build Apache Airflow? Check out our contributors' guide for a comprehensive overview of how to contribute, including setup instructions, coding standards, and pull request guidelines.

If you can't wait to contribute, and want to get started asap, check out the contribution quickstart here!

Official Docker (container) images for Apache Airflow are described in images.

+1s are considered a binding vote.

We know of around 500 organizations that are using Apache Airflow in the wild (but there are likely many more).

If you use Airflow, feel free to make a PR to add your organization to the list.

Airflow is the work of the community, but the core committers/maintainers are responsible for reviewing and merging PRs as well as steering conversations around new feature requests. If you would like to become a maintainer, please review the Apache Airflow committer requirements.

Often you will see an issue that is assigned to a specific milestone with an Airflow version, or a PR that gets merged to the main branch, and you might wonder which release the merged PR(s) or the fixed issues will be in. The answer, as usual, depends on various scenarios, and it is different for PRs and issues.

To add a bit of context, we follow the SemVer versioning scheme as described in the Airflow release process. More details are given in this README under the Semantic versioning chapter, but in short, we have MAJOR.MINOR.PATCH versions of Airflow.

- MAJOR version is incremented in case of breaking changes
- MINOR version is incremented when there are new features added
- PATCH version is incremented when there are only bug-fixes and doc-only changes

Generally we release MINOR versions of Airflow from a branch that is named after the MINOR version. For example 2.7.* releases are released from v2-7-stable branch, 2.8.* releases are released from v2-8-stable branch, etc.
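The version rules and the branch naming described above can be sketched in a few lines of Python. The helper names (`parse`, `bump`, `stable_branch`) and the change labels are hypothetical; only the MAJOR.MINOR.PATCH rules and the branch names come from the text.

```python
def parse(version: str) -> tuple:
    """Split a MAJOR.MINOR.PATCH string into three integers."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def bump(version: str, change: str) -> str:
    """Return the next version for a change labelled 'breaking', 'feature' or 'bugfix'."""
    major, minor, patch = parse(version)
    if change == "breaking":
        return f"{major + 1}.0.0"
    if change == "feature":
        return f"{major}.{minor + 1}.0"
    if change == "bugfix":
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown change type: {change}")


def stable_branch(version: str) -> str:
    """Name of the stable branch a given release is cut from."""
    major, minor, _ = parse(version)
    return f"v{major}-{minor}-stable"


print(bump("2.7.3", "feature"), stable_branch("2.8.1"))
```

Pre-release suffixes such as `rc1` or `beta1` are deliberately not handled here.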

For most of our release cycle, while the branch for the next MINOR release has not yet been created, all PRs merged to main (unless they get reverted) will find their way into the next MINOR release. For example, if the last release is 2.7.3 and the v2-8-stable branch has not been created yet, the next MINOR release is 2.8.0 and all PRs merged to main will be released in 2.8.0. However, some PRs (bug-fixes and doc-only changes), when merged, can be cherry-picked to the current MINOR branch and released in the next PATCHLEVEL release. For example, if 2.8.1 is already released and we are working on 2.9.0dev, then marking a PR with the 2.8.2 milestone means that it will be cherry-picked to the v2-8-test branch and released in 2.8.2rc1, and eventually in 2.8.2.

When we prepare for the next MINOR release, we cut new `v2-*-test` and `v2-*-stable` branches and prepare alpha and beta releases for the next MINOR version. PRs merged to main will still be released in the next MINOR release until the rc version is cut. This happens because the `v2-*-test` and `v2-*-stable` branches are rebased on top of main when the next beta and rc releases are prepared. For example, when we cut the 2.10.0beta1 version, anything merged to main before 2.10.0rc1 is released will find its way into 2.10.0rc1.

Then, once we prepare the first release candidate for the MINOR release, we stop moving the `v2-*-test` and `v2-*-stable` branches, and PRs merged to main will be released in the next MINOR release. However, some PRs (bug-fixes and doc-only changes), when merged, can be cherry-picked to the current MINOR branch and released in the next PATCHLEVEL release. For example, when the last released version from the v2-10-stable branch is 2.10.0rc1, committers can mark some of the PRs from main with the 2.10.0 milestone, and the release manager will try to cherry-pick them into the release branch. If successful, they will be released in 2.10.0rc2 and subsequently in 2.10.0. This also applies to subsequent PATCHLEVEL versions. For example, when 2.10.1 is already released, marking a PR with the 2.10.2 milestone means that it will be cherry-picked to the v2-10-stable branch and released in 2.10.2rc1, and eventually in 2.10.2.

The final decision about cherry-picking is made by the release manager.

Marking issues with a milestone is a bit different. Maintainers do not usually mark issues with a milestone; normally only PRs are marked. If a PR linked to the issue (and “fixing it”) gets merged and released in a specific version following the process described above, the issue will be automatically closed and no milestone will be set for it. You need to check the PR that fixed the issue to see which version it was released in.

However, sometimes maintainers mark issues with a specific milestone, which means that the issue is important enough to be a candidate for attention when the release is being prepared. Since this is an open-source project, where basically all contributors volunteer their time, there is no guarantee that a specific issue will be fixed in a specific version. We do not want to hold a release because some issue is not fixed, so in such cases the release manager will reassign unfixed issues to the next milestone if they are not fixed in time for the current release. Therefore, the milestone for an issue is more an intent that it should be looked at than a promise that it will be fixed in that version.

More context and FAQ about the patchlevel release can be found in the What goes into the next release document in the dev folder of the repository.

Yes! Be sure to abide by the Apache Foundation trademark policies and the Apache Airflow Brandbook. The most up-to-date logos are found in this repo and on the Apache Software Foundation website.

The CI infrastructure for Apache Airflow has been sponsored by: